Maximizing Machine Learning Accuracy with Video Annotation & Labeling:

A Comprehensive Guide

“A picture is worth a thousand words” is a saying we’ve all heard. Now, if a picture can say a thousand words, just imagine what a video can say. A million things, perhaps. One of the revolutionary subfields of artificial intelligence is computer vision. None of the ground-breaking applications we’ve been promised, such as driverless cars or intelligent retail check-outs, are possible without video annotation.

Artificial intelligence is used across several industries to automate complex projects, develop innovative and advanced products, and deliver valuable insights that change the nature of business. Computer vision is one such subfield of AI that can completely alter the way several industries that depend on massive amounts of captured images and videos operate.

Computer vision, also called CV, allows computers and related systems to draw meaningful data from visuals (images and videos) and take necessary action based on that information. Machine learning models are trained to recognize patterns and retain this information so that they can interpret real-time visual data effectively.

Who is this Guide for?

This extensive guide is for:

- All you entrepreneurs and solopreneurs who are crunching massive amounts of data regularly

- AI and machine learning professionals who are getting started with process optimization techniques

- Project managers who intend to implement a quicker time-to-market for their AI models or AI-driven products

- And tech enthusiasts who like to get into the details of the layers involved in AI processes.

What is Video Annotation?

Video annotation is the technique of recognizing, marking, and labeling each object in a video. It helps machines and computers recognize frame-to-frame moving objects in a video.

Engineers compile the annotated images into datasets under predetermined categories to train their ML models. Imagine you are training a model to improve its ability to understand traffic signals. The algorithm is trained on ground truth data containing massive amounts of video showing traffic signals, which helps the ML model predict the traffic rules accurately.

Purpose of Video Annotation & Labeling in ML

Video annotation is used mainly to create datasets for developing visual perception-based AI models. Annotated videos are extensively used to build autonomous vehicles that can detect road signs and pedestrians, recognize lane boundaries, and prevent accidents caused by unpredictable human behavior. In the retail industry, annotated videos support check-out-free stores and customized product recommendations.

It is also being used in medical and healthcare fields, particularly in Medical AI, for accurate disease identification and assistance during surgeries. Scientists are also leveraging this technology to study the effects of solar technology on birds.

Video annotation has several real-world applications. It is being used in many industries, but the automotive industry in particular leverages its potential to develop autonomous vehicle systems. Let’s take a deeper look at its main purposes.

Detect the Objects

Video annotation helps machines recognize objects captured in the videos. Since machines can’t see or interpret the world around them, they need the help of humans to identify the target objects and accurately recognize them in multiple frames.

For a machine learning system to work reliably, it must be trained on massive amounts of data to achieve the desired outcome.

Localize the Objects

There are many objects in a video, and annotating every one of them is challenging and sometimes unnecessary. Object localization means locating and annotating the most visible object, the focal part of the image.

Tracking the Objects

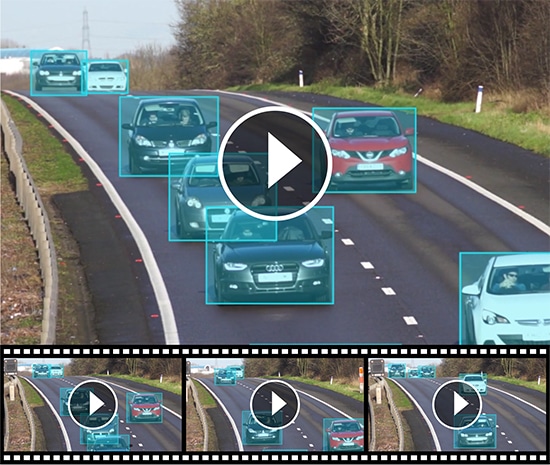

Video annotation is predominantly used in building autonomous vehicles, and it is crucial to have an object tracking system that helps machines accurately understand human behavior and road dynamics. It helps track the flow of traffic, pedestrian movements, traffic lanes, signals, road signs, and more.

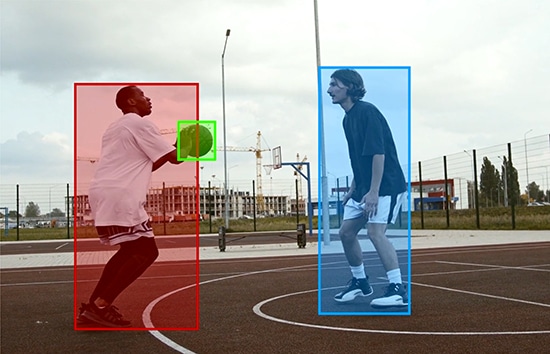

Tracking the Activities

Another reason video annotation is essential is that it is used to train computer vision-based ML models to estimate human activities and poses accurately. Video annotation helps models better understand the environment by tracking human activity and analyzing unpredictable behavior. For driverless vehicles, it also helps prevent accidents by monitoring non-static objects such as pedestrians, cats, and dogs and estimating their movements.

Video Annotation vs. Image Annotation

Video and image annotation are similar in many ways, and the techniques used to annotate images also apply to video annotation. However, there are a few basic differences between the two, and understanding them will help businesses decide which type of data annotation they need for their specific purpose.

Data

When you compare a video and a still image, a moving picture such as a video is a much more complex data structure. A video offers much more information per frame and much greater insight into the environment.

Unlike a still image that shows limited perception, video data provides valuable insights into the object’s position. It also lets you know whether the object in question is moving or stationary and also tells you about the direction of its movement.

For instance, when you look at a picture, you might not be able to discern if a car has just stopped or started. A video gives you much better clarity than an image.

Since a video is a series of images delivered in a sequence, it offers information about partially or fully obstructed objects as well by comparing before and after frames. On the other hand, an image talks about the present and doesn’t give you a yardstick for comparison.

Finally, a video carries more information than a single image. So, when companies want to develop immersive or complex AI and machine learning solutions, video annotation will come in handy.

Annotation Process

Since videos are complex and continuous, they offer an added challenge to annotators. Annotators are required to scrutinize each frame of the video and accurately track the objects in every stage and frame. To achieve this more effectively, video annotation companies used to bring together several teams to annotate videos. However, manual annotation turned out to be a laborious and time-consuming task.

Advancements in technology have ensured that computers, these days, can effortlessly track objects of interest across the entire length of the video and annotate whole segments with little to no human intervention. That’s why video annotation is becoming much faster and more accurate.

Accuracy

Companies are using annotation tools to ensure greater clarity, accuracy, and efficiency in the annotation process. Using annotation tools significantly reduces the number of errors. For video annotation to be effective, it is crucial to use the same category or label for the same object throughout the video.

Video annotation tools can track objects automatically and consistently across frames and apply the same context for categorization. This ensures greater consistency and accuracy and, in turn, better AI models.
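As a rough illustration of the "same label for the same object" rule, here is a minimal Python sketch that flags tracked objects carrying more than one label. The record layout (frame index, track ID, label) and the sample values are assumptions made for this example, not the export format of any particular annotation tool.

```python
from collections import defaultdict

# Hypothetical annotation records: (frame_index, track_id, label).
annotations = [
    (0, "obj-1", "car"),
    (1, "obj-1", "car"),
    (2, "obj-1", "truck"),  # same tracked object, different label
    (0, "obj-2", "pedestrian"),
    (1, "obj-2", "pedestrian"),
]


def find_inconsistent_tracks(records):
    """Return {track_id: sorted labels} for tracks that carry more than one label."""
    labels_by_track = defaultdict(set)
    for _frame, track_id, label in records:
        labels_by_track[track_id].add(label)
    return {
        track_id: sorted(labels)
        for track_id, labels in labels_by_track.items()
        if len(labels) > 1
    }


print(find_inconsistent_tracks(annotations))  # {'obj-1': ['car', 'truck']}
```

A check like this could run after each annotation batch so that conflicting labels are reviewed before the data reaches model training.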


Video Annotation Techniques

Image and video annotation use similar tools and techniques, although video annotation is more complex and labor-intensive. Unlike a single image, a video is difficult to annotate since it can contain as many as 60 frames per second. Videos take longer to annotate and require advanced annotation tools as well.
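To get a feel for the scale, the frame count is simply the duration multiplied by the frame rate. The short Python sketch below works this out for a hypothetical ten-minute clip; the clip length is an assumption chosen only for illustration.

```python
def total_frames(duration_seconds, fps):
    """Number of frames in a clip of the given duration and frame rate."""
    return int(duration_seconds * fps)


# A hypothetical 10-minute clip at two frame rates.
print(total_frames(600, 60))  # 36000
print(total_frames(600, 20))  # 12000
```

Even at the lower frame rate, annotating every frame by hand means thousands of individual images for a single short clip.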

Single Image Method

The single image method was used before annotation tools came into use; however, it is not an efficient way of annotating video. This method is time-consuming and doesn’t deliver the benefits a video offers.

Another major drawback of this method is that since the entire video is considered as a collection of separate frames, it creates errors in object identification. The same object could be classified under different labels in different frames, making the entire process lose accuracy and context.

The time that goes into annotating videos using the single image method is exceptionally high, which increases the cost of the project. Even a smaller project with video at fewer than 20 fps will take a long time to annotate. The result can be misclassification errors, other annotation errors, and missed deadlines.

Continuous Frame Method

The continuous frame method uses techniques such as optical flow to compare the pixels in one frame with those in the next and analyze how they move. It also ensures objects are classified and labeled consistently across the video. The entity is consistently recognized even when it moves in and out of the frame.

When this method is used to annotate videos, the machine learning project can accurately identify objects that are present at the beginning of the video, disappear from view for a few frames, and then reappear.

If the single image method is used for annotation, the computer might treat the reappearing object as a new object, resulting in misclassification. In the continuous frame method, however, the computer takes the motion of objects between frames into account, so the continuity and integrity of the video are maintained.

The continuous frame method is a faster way to annotate, and it provides greater capabilities to ML projects. The annotation is precise, human bias is reduced, and the categorization is more accurate. However, it is not without risks. Factors such as image quality and video resolution can affect its effectiveness.
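The idea of keeping one identity for an object that leaves and re-enters the view can be sketched in a few lines of Python. Note that this is not optical flow: it is a much simpler greedy nearest-centroid matcher used here as a stand-in, and the class name, the `max_distance` and `max_missing` parameters, and the sample coordinates are all assumptions made for this example.

```python
import math


class CentroidTracker:
    """Greedy nearest-centroid tracker that remembers objects for a few missed frames."""

    def __init__(self, max_distance=50.0, max_missing=5):
        self.max_distance = max_distance  # largest jump still treated as the same object
        self.max_missing = max_missing    # frames an object may be absent before it is forgotten
        self.next_id = 0
        self.tracks = {}  # track_id -> {"centroid": (x, y), "missing": int}

    def update(self, centroids):
        """Assign a track ID to each detected centroid in the current frame."""
        assigned = {}
        unmatched = set(self.tracks)
        for point in centroids:
            best_id, best_dist = None, self.max_distance
            for track_id in unmatched:
                dist = math.dist(point, self.tracks[track_id]["centroid"])
                if dist <= best_dist:
                    best_id, best_dist = track_id, dist
            if best_id is None:
                # No known object is close enough, so start a new track.
                best_id = self.next_id
                self.next_id += 1
            else:
                unmatched.discard(best_id)
            self.tracks[best_id] = {"centroid": point, "missing": 0}
            assigned[best_id] = point
        # Age the tracks that were not seen in this frame.
        for track_id in unmatched:
            self.tracks[track_id]["missing"] += 1
            if self.tracks[track_id]["missing"] > self.max_missing:
                del self.tracks[track_id]
        return assigned


# One object is visible, vanishes for two frames, then reappears nearby.
frames = [[(10, 10)], [(14, 11)], [], [], [(22, 13)]]
tracker = CentroidTracker()
print([sorted(tracker.update(frame)) for frame in frames])  # [[0], [0], [], [], [0]]
```

With a very small `max_missing`, the same reappearing object would instead be given a new ID, which mirrors the misclassification risk of treating every frame in isolation.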

Types of Video Labeling / Annotation

Several video annotation methods, such as landmark, semantic, 3D cuboid, polygon, and polyline annotation, are used to annotate videos. Let’s look at the most popular ones here.

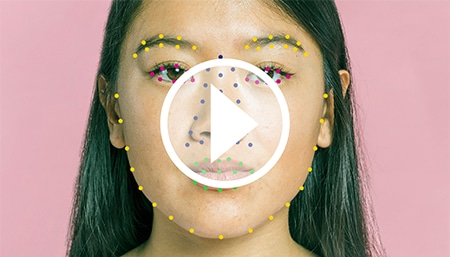

Landmark Annotation

Landmark annotation, also called key point annotation, is generally used to identify smaller objects, shapes, postures, and movements.

Dots are placed across the object and linked, which creates a skeleton of the item across each video frame. This type of annotation is mainly used to detect facial features, poses, emotions, and human body parts for developing AR/VR applications, facial recognition applications, and sporting analytics.
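One simple way to picture a landmark annotation is as a set of named points plus the links between them. The Python sketch below is only illustrative: the keypoint names, coordinates, and skeleton edges are assumptions invented for this example rather than a standard schema.

```python
# Hypothetical keypoints (pixel coordinates) for one person in one frame.
keypoints = {
    "head": (120, 40),
    "neck": (120, 70),
    "left_hand": (80, 120),
    "right_hand": (160, 120),
    "hip": (120, 150),
}

# Pairs of keypoints that are linked to form the skeleton.
skeleton = [
    ("head", "neck"),
    ("neck", "left_hand"),
    ("neck", "right_hand"),
    ("neck", "hip"),
]


def skeleton_segments(points, edges):
    """Return line segments for every edge whose two keypoints are both annotated."""
    return [(points[a], points[b]) for a, b in edges if a in points and b in points]


for start, end in skeleton_segments(keypoints, skeleton):
    print(start, "->", end)
```

Skipping edges with a missing endpoint lets the same structure handle frames where part of the body is hidden.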

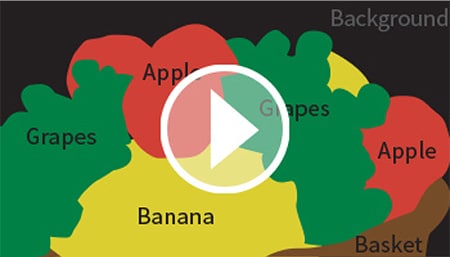

Semantic Segmentation

Semantic segmentation is another type of video annotation that helps train better artificial intelligence models. Each pixel present in an image is assigned to a specific class in this method.

By assigning a label to each image pixel, semantic segmentation treats several objects of the same class as one entity. With instance segmentation, however, several objects of the same class are treated as separate individual instances.
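The difference between the two can be shown on a tiny mask. In the Python sketch below, the semantic mask marks every "car" pixel with the same class value, and a connected-component pass then separates the pixels into individual instances. The mask values and the function name are assumptions for illustration, and connected components are only a stand-in: in real projects annotators mark instances directly, and two touching objects would be merged by this shortcut.

```python
from collections import deque

# Semantic mask: 0 = background, 1 = car. Both cars share the same class value.
semantic_mask = [
    [0, 1, 1, 0, 0, 1, 1],
    [0, 1, 1, 0, 0, 1, 1],
    [0, 0, 0, 0, 0, 0, 0],
]


def label_instances(mask, target_class):
    """Split pixels of one class into 4-connected regions with IDs 1, 2, ..."""
    rows, cols = len(mask), len(mask[0])
    instances = [[0] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            if mask[r][c] != target_class or instances[r][c]:
                continue
            next_id += 1
            instances[r][c] = next_id
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] == target_class
                            and not instances[ny][nx]):
                        instances[ny][nx] = next_id
                        queue.append((ny, nx))
    return instances, next_id


instance_mask, count = label_instances(semantic_mask, target_class=1)
print(count)  # 2
for row in instance_mask:
    print(row)
```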

3D Cuboid Annotation

This annotation technique is used for an accurate 3D representation of objects. The 3D bounding box method helps label the object’s length, width, and depth when in motion and analyzes how it interacts with the environment. It helps detect the object’s position and volume in relation to its three-dimensional surroundings.

Annotators start by drawing bounding boxes around the object of interest and placing anchor points at the edges of the box. During motion, if one of the object’s anchor points is blocked or out of view because of another object, it is possible to estimate where the edge would be from the length, height, and angle measured in the frame.
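Because a cuboid is fully described by its center, its dimensions, and its heading angle, every corner can be computed even when some corners are hidden. The Python sketch below shows this under assumed conventions (center plus length, width, height, and a yaw angle around the vertical axis); the function names and sample values are hypothetical.

```python
import math
from itertools import product


def cuboid_corners(center, size, yaw=0.0):
    """Return the 8 corners of a cuboid rotated by `yaw` radians around the vertical axis."""
    cx, cy, cz = center
    length, width, height = size
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    corners = []
    for sx, sy, sz in product((-0.5, 0.5), repeat=3):
        dx, dy, dz = sx * length, sy * width, sz * height
        corners.append((
            cx + dx * cos_y - dy * sin_y,  # rotate the offset in the ground plane
            cy + dx * sin_y + dy * cos_y,
            cz + dz,
        ))
    return corners


def cuboid_volume(size):
    """Volume from length, width, and height."""
    length, width, height = size
    return length * width * height


# A hypothetical car-sized box: 4 m long, 2 m wide, 1.5 m high.
car_size = (4.0, 2.0, 1.5)
print(len(cuboid_corners((0.0, 0.0, 0.75), car_size)))  # 8
print(cuboid_volume(car_size))  # 12.0
```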

Polygon Annotation

The polygon annotation technique is generally used when a 2D or 3D bounding box is insufficient to capture an object’s shape accurately, whether it is still or in motion. For example, polygon annotation is well suited to outlining an irregular object, such as a human being or an animal.

For the polygon annotation technique to be accurate, the annotator must draw lines by placing dots precisely around the edge of the object of interest.
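To see why a polygon can describe an irregular shape more tightly than a box, it helps to compare areas. The Python sketch below computes a polygon's area with the shoelace formula and compares it with the area of the enclosing rectangle; the vertex coordinates are made up for this example.

```python
def polygon_area(vertices):
    """Area of a simple polygon from its ordered (x, y) vertices (shoelace formula)."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(vertices, vertices[1:] + vertices[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def enclosing_box_area(vertices):
    """Area of the axis-aligned rectangle that encloses the polygon."""
    xs = [x for x, _ in vertices]
    ys = [y for _, y in vertices]
    return (max(xs) - min(xs)) * (max(ys) - min(ys))


# A hypothetical five-sided outline drawn dot by dot around an object.
outline = [(0, 0), (4, 0), (4, 3), (2, 5), (0, 3)]
print(polygon_area(outline))        # 16.0
print(enclosing_box_area(outline))  # 20
```

In this example the box covers a fifth more area than the polygon, and that extra area is background the model would otherwise see as part of the object.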

Polyline Annotation

Polyline annotation helps train computer vision-based AI tools to detect street lanes for developing high-accuracy autonomous vehicle systems. By detecting lanes, borders, and boundaries, the system can determine direction, traffic, and diversions.

The annotator draws precise lines along the lane borders so that the AI system can detect lanes on the road.
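A polyline is just an ordered list of points that is not closed into a shape. As a small, hedged Python illustration, the sketch below stores a lane border as a list of points and measures its length in pixels; the coordinates are invented for this example.

```python
import math


def polyline_length(points):
    """Total length of an open polyline given as ordered (x, y) points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


# Hypothetical lane border drawn by an annotator in one frame.
lane_border = [(0, 0), (3, 4), (3, 10)]
print(polyline_length(lane_border))  # 11.0
```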

2D Bounding Box

The 2D bounding box method is perhaps the most used to annotate videos. In this method, annotators place rectangular boxes around the objects of interest for identification, categorization, and labeling. The rectangular boxes are drawn manually around the objects across frames when they are in motion.

To ensure the 2D bounding box method works efficiently, the annotator has to make sure the box is drawn as close to the object’s edge as possible and labeled appropriately across all frames.
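A common way to quantify how closely one box matches another is intersection over union (IoU), the overlap area divided by the combined area. The Python sketch below compares a loosely drawn box with a tight reference box; the (x_min, y_min, x_max, y_max) layout and the coordinates are assumptions for this example.

```python
def box_iou(box_a, box_b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0, min(ay2, by2) - max(ay1, by1))
    intersection = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - intersection
    return intersection / union if union else 0.0


tight_box = (10, 10, 50, 50)  # hugs the object's edges
loose_box = (0, 0, 60, 60)    # leaves a 10-pixel margin on every side
print(round(box_iou(tight_box, loose_box), 3))  # 0.444
```

A score of 1.0 means the boxes match exactly, so a review step could flag any box whose IoU against a reference falls below a chosen threshold.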

Video Annotation Industry Use Cases

The possibilities of video annotation seem endless; however, some industries are using this technology much more than others. We have only just touched the tip of this innovative iceberg, and more is yet to come. Below, we list the industries that increasingly rely on video annotation.

Autonomous Vehicle Systems

Computer vision-enabled AI systems are helping develop self-driving and driverless cars. Video annotation has been widely used in developing high-end autonomous vehicle systems to detect objects such as signals, other vehicles, pedestrians, street lights, and more.

Medical Artificial Intelligence

The healthcare industry is also seeing a significant increase in the use of video annotation services. Among the many benefits that computer vision offers are medical diagnostics and imaging.

While medical AI has only recently started to leverage computer vision, we are sure that it has a plethora of benefits to offer to the medical industry. Video annotation is proving helpful in analyzing mammograms, X-rays, CT scans, and more to help monitor patients' conditions. It also assists healthcare professionals in identifying conditions early and helping with surgery.

Retail Industry

The retail industry also uses video annotation to understand consumer behavior and enhance its services. By annotating videos of consumers in stores, retailers can learn how customers select products and return them to shelves, and they can detect and prevent theft.

Geospatial Industry

Video annotation is being used in the surveillance and imagery industry as well. The annotation task includes deriving valuable intelligence from drone, satellite, and aerial footage to train ML models that improve surveillance and security. These models are trained to follow suspects and vehicles and to track behavior visually. Geospatial technology is also powering agriculture, mapping, logistics, and security.

Agriculture

Computer vision and artificial intelligence capabilities are being used to improve agriculture and livestock management. Video annotation is also helping understand and track plant growth and livestock movement and improve harvesting machinery performance.

Computer vision can also analyze grain quality, weed growth, herbicide usage, and more.

Media

Video annotation is also being used in the media and content industry. It helps analyze, track, and improve sports team performance, identify sexual or violent content in social media posts, improve advertising videos, and more.

Industrial

The manufacturing industry is also increasingly using video annotation to improve productivity and efficiency. Robots are being trained on annotated videos to navigate around stationary objects, inspect assembly lines, and track packages in logistics. Robots trained on annotated videos are also helping spot defective items on production lines.

Common Challenges of Video Annotation

Video annotation/labeling can pose a few challenges to annotators. Let’s look at some points you need to consider before beginning video annotation for computer vision projects.

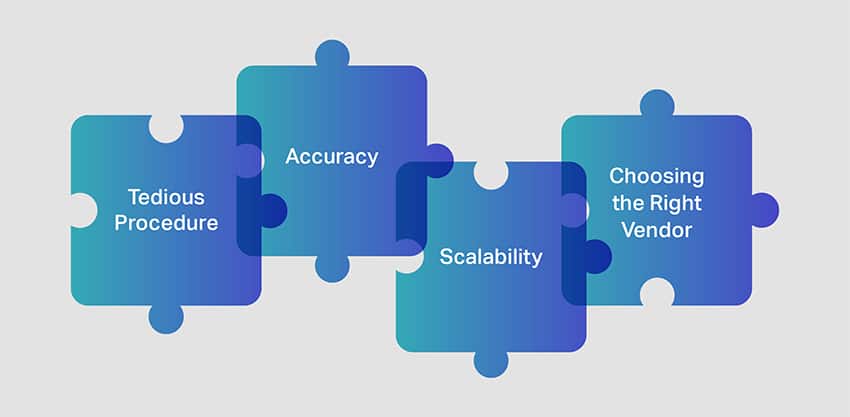

Tedious Procedure

One of the biggest challenges of video annotation is dealing with massive video datasets that need to be scrutinized and annotated. To accurately train the computer vision models, it is crucial to access large amounts of annotated videos. Since the objects aren’t still, as they would be in an image annotation process, it is essential to have highly skilled annotators who can capture objects in motion.

The videos must be broken down into smaller clips of several frames, and individual objects can then be identified for accurate annotation. Unless annotating tools are used, there is a risk of the entire annotation process being tedious and time-consuming.
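Breaking a video into clips amounts to splitting its frame indices into fixed-size chunks. The Python sketch below shows one minimal way to do that; the function name and the clip length are assumptions for illustration, and reading the actual frames from a video file is left out.

```python
def split_into_clips(total_frames, clip_length):
    """Split frame indices 0..total_frames-1 into consecutive clips of at most clip_length frames."""
    if clip_length <= 0:
        raise ValueError("clip_length must be positive")
    return [
        range(start, min(start + clip_length, total_frames))
        for start in range(0, total_frames, clip_length)
    ]


# A hypothetical 10-frame video split into clips of 4 frames.
for clip in split_into_clips(10, 4):
    print(list(clip))
```

Each clip can then be handed to a different annotator, with the last clip simply holding whatever frames remain.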

Accuracy

Maintaining a high level of accuracy during the video annotation process is a challenging task. The annotation quality should be consistently checked at every stage to ensure the object is tracked, classified, and labeled correctly.

Unless the quality of annotation is checked at different levels, it is impossible to design or train a unique, high-quality algorithm. Moreover, inaccurate categorization or annotation can seriously impact the quality of the prediction model.

Scalability

In addition to ensuring accuracy and precision, video annotation should also be scalable. Companies prefer annotation services that help them quickly develop, deploy, and scale ML projects without massively impacting the bottom line.

Choosing the right video labeling vendor

It is also essential to engage a provider who ensures security standards and regulations are followed thoroughly. Choosing the most popular provider or the cheapest might not always be the right move. You should seek the right provider based on your project needs, quality standards, experience, and team expertise.

Conclusion

Video annotation is as much about the technology as the team working on the project. It has a plethora of benefits to a range of industries. Still, without the services of experienced and capable annotators, you might not be able to deliver world-class models.

When you are looking to launch an advanced computer vision-based AI model, Shaip should be your choice of service provider. When it comes to quality and accuracy, experience and reliability matter. They can make a whole lot of difference to your project’s success.

At Shaip, we have the experience to handle video annotation projects of differing levels of complexity and requirement. We have an experienced team of annotators trained to offer customized support for your project and human supervision specialists to satisfy your project’s short-term and long-term needs.

We only deliver the highest quality annotations that adhere to stringent data security standards without compromising deadlines, accuracy, and consistency.


Frequently Asked Questions (FAQ)

Video annotation is the labeling of video clips used to train machine learning models to help the system identify objects. Video annotation is a complex process, unlike image annotation, as it involves breaking down the entire video into several frames and sequences of images. The frame-by-frame images are annotated so that the system can recognize and identify objects accurately.

Video annotators use several tools to help them annotate the video effectively. However, video annotation is a complex and lengthy process. Since annotating videos takes much longer than annotating images, tools help make the process faster, reduce errors, and increase classification accuracy.

Yes, it is possible to annotate YouTube videos. Using the annotation tool, you can add text, highlight parts of your video and add links. You can edit and add new annotations, choosing from different annotation types, such as speech bubble, text, spotlight, note, and label.

The total cost of video annotation depends on several factors, including the length of the video, the type of tool used for the annotation process, and the type of annotation required. You should also consider the time spent by human annotators and supervision specialists to ensure high-quality work is delivered. A professional video annotation job is necessary to develop quality machine learning models.

The quality of annotation depends on its accuracy and on how well it trains your ML model for its specific purpose. A high-quality job will be devoid of bias, classification errors, and missing frames. Multiple checks at various levels of the annotation process will ensure a higher quality of work.