
Poor training data does not just hurt model accuracy. It triggers a costly chain reaction. This article shows data leaders exactly where the money bleeds and what to do about it.

Domain-specific LLMs are how businesses move from AI curiosity to AI utility where outcomes are measurable, adoption actually sticks, and teams trust what the model tells them.

Shaip’s human-in-the-loop annotation model ensures that every dataset is reviewed by subject-matter experts, not just crowdworkers, resulting in annotation accuracy rates that consistently exceed industry benchmarks. Its enterprise-grade data governance framework covers GDPR, HIPAA, and SOC 2 compliance, making Shaip a provider that large regulated enterprises can trust with sensitive AI training data.

Shaip is a strong choice when you need human-led LLM evaluation with enterprise expectations: consistent rubrics, domain-aware reviewers, and services that support model alignment workflows (including RLHF-style feedback).

If your priority is healthcare-ready pipelines—especially clinical NLP, unstructured text, audio, and privacy-first workflows—Shaip is one of the most complete and healthcare-aligned choices in this shortlist.

Shaip is a global AI data platform specializing in ethically sourced, enterprise-grade speech, text, and medical data. By 2026, Shaip is widely recognized for its strength in regulated industries and custom speech collection.

Teams that want end-to-end LLM training data support (collection + annotation) plus LLM-focused services like RLHF and evaluation/safety workflows.

As artificial intelligence systems move from experimentation to real-world deployment, data annotation has become one of the most critical success factors in AI development. High-quality annotation directly impacts model accuracy, fairness, safety, and regulatory readiness—especially for advanced use cases like healthcare AI, autonomous systems, and generative AI.

Shaip is a specialized AI training data provider focused on delivering high-quality, domain-specific datasets, particularly for healthcare, life sciences, speech AI, and regulated industries. Unlike generalist providers, Shaip emphasizes ethical data sourcing, compliance, and deep subject-matter expertise. The company works closely with enterprises that require precision, privacy, and regulatory alignment.

As we approach 2025, facial recognition technology stands at the forefront of innovation, with the potential to transform industries. However, balancing these advancements with ethical responsibilities is crucial. By addressing privacy and bias issues, we can harness the full potential of this technology for the greater good.

Building high-quality datasets with LLMs is a transformative approach that combines the power of language models with traditional dataset creation techniques. By leveraging LLMs for data sourcing, preprocessing, augmentation, labeling, and evaluation, researchers can construct robust and diverse datasets more efficiently.

One of the best ways to stay ahead of concerns is to stay abreast of the latest advancements and developments in the LLM space. This is especially critical with respect to cybersecurity. The wider your understanding of the subject, the more metrics and techniques you can come up with to monitor your models.

Shaip represents a talented team of specialists with extensive knowledge of how AI and its applications can transform your organization. Leverage our understanding of AI, specifically text-to-speech capabilities, to build AI programs based on accurate and extensive data, allowing you to tailor your use of AI and achieve the best possible results.

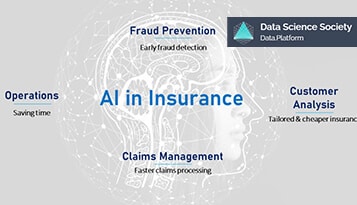

Artificial intelligence has some sweeping advantages for the insurance industry, provided companies understand its implementation. Beyond streamlining tasks like claim processing, premium setting, and damage detection, it can also help with customer service, increasing the overall satisfaction level.

Data de-identification is crucial for safeguarding personally identifiable information in healthcare, aligning with regulatory requirements such as HIPAA and GDPR. The featured tools, including IBM InfoSphere Optim, Google Healthcare API, AWS Comprehend Medical, Shaip, and Private-AI, offer diverse solutions for effective data masking.

Data de-identification is a critical procedure for protecting personal data from unauthorized access and unlawful use. Especially important for healthcare data, this process ensures no personally identifiable information lands in the hands of anyone other than those directly connected to the data.
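As a rough illustration of the masking idea described above, the following is a minimal sketch, not a compliant de-identification pipeline. The pattern set and the `mask_pii` helper are hypothetical names chosen for this example; real healthcare de-identification covers many more identifier types and usually combines rules with trained models and human review.

```python
import re

# Hypothetical, deliberately small pattern set for illustration only.
# Production de-identification must handle far more identifier types
# (names, dates, addresses, record numbers, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def mask_pii(text: str) -> str:
    """Replace each matched identifier with a bracketed type label."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    note = "Contact jane.doe@example.com or 555-123-4567; SSN 123-45-6789."
    print(mask_pii(note))
```

Replacing identifiers with type labels rather than deleting them keeps the sentence structure intact, which can matter when the masked text is later used as training data.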

Conversational and generative AI are transforming our world in unique ways. Conversational AI makes talking to machines easy and helpful, improving customer support and healthcare services. Generative AI, on the other hand, is a creative powerhouse. It invents new, original content in art, music, and more. Understanding these AI types is key to smart business, ethics, and innovation decisions.

Generative AI is reshaping the landscape of banking and financial services, introducing efficiencies, enhancing security, and delivering personalized experiences for both customers and institutions. As the technology continues to advance, its impact on the financial industry is likely to grow, ushering in a new era of innovation and optimization.

The volume and frequency of user-generated content are going to increase in the coming years. Customers today have access to innovative tools, allowing them to know everything about a brand. While engaging with existing, new, and potential customers is essential for a brand, monitoring and moderating content is pivotal to creating a positive image.

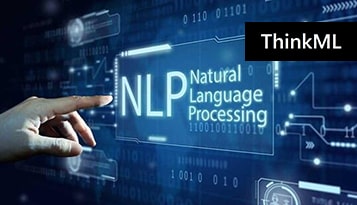

Natural language processing (NLP) has started an information extraction and analysis revolution in all industries. The versatility of this technology is also evolving to deliver better solutions and new applications. The usage of NLP in finance is not limited to the applications we have mentioned above. With time, we can use this technology and its techniques for even more complex tasks and operations.

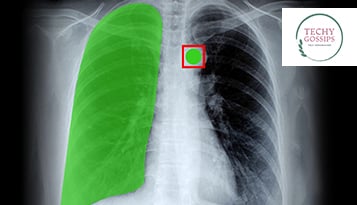

At the core of the applications of AI in healthcare is data and its correct analysis. Using this data and information provided by healthcare professionals, AI tools and technologies are able to deliver better healthcare solutions in terms of diagnosis, treatment, prediction, prescription, and imaging.

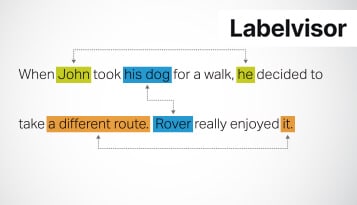

Named entity recognition is a vital technique that paves the way for advanced machine understanding of the text. While open-source datasets have advantages and disadvantages, they are instrumental in training and fine-tuning NER models. A reasonable selection and application of these resources can significantly elevate the outcomes of NLP projects.

Generative AI is an exciting frontier that is redefining the boundaries of technology and creativity. From generating human-like text to creating realistic images, enhancing code development, and even simulating unique audio outputs, its real-world applications are as diverse as they are transformative.

The use cases of Natural Language Processing in healthcare are vast and transformative. By harnessing the power of AI, machine learning, and conversational AI, NLP is revolutionizing how healthcare professionals approach patient care. It is making medical workflows more efficient and improving overall patient outcomes.

All in all, the healthcare field is full of patients and doctors who are motivated to once again make a difference in the lives of people around the world. Access to large data sets is one way artificial intelligence will continue to prove itself as the future of medicine. It’s up to researchers and developers alike to take advantage of these unique datasets to improve our understanding of clinical trials and patient care as we move toward an increasingly connected future for everyone.

The changes are ongoing, leading to a more bankable, profitable future that provides a better user experience. With these changes coupled with the ability to learn from other companies’ mistakes, the BFSI sector will continue moving forward rapidly towards using facial recognition—a more effective, safer end goal for all bodies involved.

Voice search is a burgeoning field of technology. It is slowly but surely taking giant strides as it becomes more capable with AI, natural language processing, and machine learning. The type of AI that exists now is not sentient; these voice assistants are tools to make our lives better, simpler, and more efficient.

Voice recognition technology can potentially revolutionize the healthcare industry in several ways. By enabling faster and more accurate documentation, reducing the risk of errors, and improving patient engagement, voice recognition technology can help healthcare providers provide better quality care.

Banks will have a positive experience when implementing AI technologies. This is based on interviews with companies that already utilize AI in their business processes. As long as safeguards are built to ensure customer data safety and ethical standards that can be automatically regulated, banks should implement AI into their systems.

The impact of machine learning in the call center market is real and measurable. Real-time data capture and machine learning have been married to allow even more efficient call centers. In addition, voice-based solutions have increased throughout North America and continue spreading across the globe.

Voice recognition technology is becoming increasingly important in health care, with doctors and nurses relying on it more and more to handle many of their professional duties. While many questions still need to be addressed before we see widespread use of this technology in hospitals, clinical environments, and doctor’s offices, the early signs suggest significant promise.

Video annotation technology is meant to keep retail AI systems and customers safe. Video annotation software does this by letting people quickly and easily alert authorities when they witness something suspicious in a retail setting, and by helping AI systems learn from past experiences so they can better judge what counts as normal behavior and tailor their responses accordingly.

Use cases of facial recognition can work wonders when storing and retrieving data, but they also come with an intriguing ethical quandary. Does it make sense to use such a technology? Some people believe the answer is “no,” particularly regarding facial recognition’s invasion of privacy. Others point to the usefulness of these new tools, which is why this technology might not be one you want to avoid at all costs.

Custom wake words can help with your brand’s personalization and set it apart from competitors. There are a lot of factors to consider when selecting a custom wake word. But, if you want to stand out in today’s competitive business world, it’s worth it to put the extra effort into making sure that your voice assistant sounds unique.

New voice technology advancements are here to stay. They will only continue to grow in popularity, making now the perfect time to get ahead of the curve and start creating innovative voice experiences for drivers. As car manufacturers integrate speech recognition into their cars, this opens up a new world of possibilities for the technology and its users.

It’s clear that food AI will have a huge influence on how we eat. From fast food chains’ drive towards more customizable menus to a slew of new, innovative restaurants, there are countless opportunities for technology to simplify our eating experiences and improve the quality of our food. With the advancement of artificial intelligence and machine learning algorithms, we can expect intelligent food AI to positively impact our health and the overall ecological impact of our food system.

The insurance industry has been traditionally conservative with technology advances and hesitant to adopt new technologies. However, times are changing, and artificial intelligence (AI) is gaining much attention from insurance companies, who are starting to realize the important role that AI can play in their operations.

Banking isn’t what it used to be. Most of us need fast, efficient, flawless banking services that are hassle-free and, most importantly, reliable. It only makes sense to shift to digital banking channels that can provide these things. As it turns out, artificial intelligence (AI) and machine learning (ML) powered virtual assistants can do precisely that.

Hey Siri, can you find me a good blog post that lists the top Conversational AI trends? Or, Alexa, can you simply play me a song that takes my mind off the mundane daily tasks? Well, these aren’t just rhetorical questions but standard drawing-room discussions that validate the overall impact of a concept called Conversational AI.

The hard truth is that the quality of your collected training data determines the quality of your speech recognition model or even the device. Therefore, it is necessary to connect with experienced data vendors to help you sail through the process without a lot of effort, especially when training a model or the concerned algorithms requires the collection, annotation, and other skillful strategies.

Optical Character Recognition (OCR) is a field of Artificial Intelligence (AI) that is specifically related to computer vision and pattern recognition. OCR refers to the process of extracting information from multiple data formats like images, PDFs, handwritten notes, and scanned documents and converting them into digital format for further processing.

On top of that, Conversational AI constantly learns from previous experiences using machine learning datasets to offer real-time insight and excellent customer service. Conversational AI not only understands and responds to our queries but can also be connected to other AI technologies such as search and vision to fast-track the process.

Artificial Intelligence makes machines smarter, period! Yet, the way they do it is as different and intriguing as the concerned vertical. For instance, the likes of Natural Language Processing come in handy if you were to design and develop witty chatbots and digital assistants. Similarly, if you want to make the insurance sector more transparent and accommodative toward the users, Computer Vision is the AI subdomain that you must focus on.

Can machines detect emotions by simply scanning the face? The good news is that they can. And the bad news is that the market still has a long way to go before turning mainstream. Yet, the roadblocks and adoption challenges aren’t stopping the AI evangelists from putting ‘Emotion Detection’ on the AI map—quite aggressively.

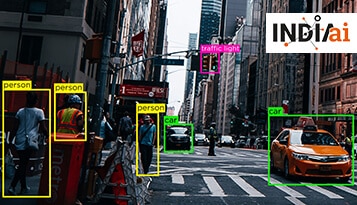

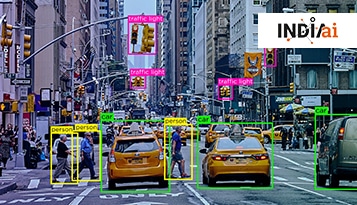

Computer Vision isn’t as widespread as other AI applications like Natural Language Processing. Yet, it is slowly coming up the ranks, making 2022 an exciting year for larger-scale adoption. Here are some of the trendy computer vision potentials (mostly the domains) that are expected to be better explored by businesses in 2022.

The answer is Automatic Speech Recognition (ASR). It is a huge step to transform the spoken word into written form. ASR is a trend that is set to make noise in 2022. The growth of voice assistants is driven by smartphones with built-in voice assistants and smart voice devices like Alexa.

Are you searching for the brains behind the best Artificial Intelligence models? Well, bow down to the Data Annotators. Even though data annotation takes center stage in preparing resources relevant to every AI-driven vertical, we shall explore the concept and learn more about the labeling protagonists from the perspective of Healthcare AI.

With digital payments rising across the globe, how can financial organizations ensure maximum sales conversion and payment acceptance, as well as minimize risk exposure? Sounds alarming? In a finance industry that is highly reliant on data processing and information, maintaining a marginal edge and understanding the natural nuances of customers to provide on-time resolution requires AI-related technology.

As a branch of Artificial Intelligence, NLP is all about making machines responsive to human language. Coming to the tech aspect of it, NLP, quite appropriately, uses computer science, linguistics, algorithms, and overall language structure to make the machines intelligent. The proactive and intuitive machines, whenever built, can extract, analyze, and understand the true meaning and context from speech and even text.

Are you aware of the technicalities involved in making Machine Learning models holistic, intuitive, and impactful? If not, you first need to understand how each process is broadly segregated into three phases, i.e., Fun, Functionality, and Finesse. While the ‘Finesse’ concerns training ML algorithms to perfection by first developing complex programs using relevant programming languages, the ‘Fun’ part is all about making the customers happy by offering them a perceptive, intelligent, and fun product.

Data labeling isn’t all that difficult, said no organization ever! But despite the challenges along the way, not many understand the exacting nature of the tasks at hand. Labeling data sets, especially to make them suitable for AI and Machine learning models, is something that requires years of experience and hands-on credibility. And to top it all, data labeling isn’t a one-dimensional approach and varies depending on the type of model in the works.

Financial services have metamorphosed over time. The surge in mobile payments, personal banking solutions, better credit monitoring, and other financial patterns further ensures that the realm concerning monetary inclusions isn’t what it was a few years back. In 2021, it isn’t just about the ‘Fin’ or Finance but all ‘FinTech’ with disruptive Financial Technologies making their presence felt to change the customer experience, modus operandi for relevant organizations, or the entire fiscal arena to be exact.

Despite the timely ascension of the automotive industry, the vertical leaves a lot of scope for incremental improvements. Starting from lowering traffic accidents to improving vehicle manufacturing and resource deployment, Artificial Intelligence seems like the most probable solution to get things moving skywards.

Artificial Intelligence seems more like marketing jargon these days. Every company, startup, or business you know now promotes its products and services with the term ‘AI-powered’ as its USP. True to this, artificial intelligence sure seems to be inevitable nowadays. If you notice, almost everything you have around you is powered by AI. From the recommendation engines on Netflix and algorithms in dating apps to some of the most complex entities in the healthcare sector that help in oncology, artificial intelligence is at the fulcrum of everything today.

Artificial Intelligence (AI) is ambitious and immensely beneficial for the advancement of humankind. In a space like healthcare, especially, artificial intelligence is bringing about remarkable changes in the ways we approach the diagnosis of diseases, their treatments, patient care, and patient monitoring. Not to forget the research and development involved in the development of new drugs, newer ways to discover concerns and underlying conditions, and more.

Healthcare, as a vertical, was never static. But then, it hasn’t been this dynamic ever, with the confluence of disparate medical insights, making us stare inanimately at piles of unstructured data. To be honest, the gargantuan volume of data isn’t even an issue anymore. It’s a reality, which even exceeded the 2,000 Exabyte mark by the end of 2020.

Every time your GPS navigation system asks you to take a detour to avoid traffic, realize that such precise analysis and results come after several hundreds of hours of training. Whenever your Google Lens app accurately identifies an object or a product, understand that thousands upon thousands of images have been processed by its AI (Artificial Intelligence) module for exact identification.

Now that the entire planet is online and connected, we are collectively generating immeasurable quantities of data. An industry, a business, market segment, or any other entity would view data as a single unit. Still, as far as individuals are concerned, data is better referred to as our digital footprint.

Quality data translates to success stories while poor data quality makes for a good case study. Some of the most impactful case studies on AI functionality have stemmed from a lack of quality datasets. While companies are all excited and ambitious about their AI ventures and products, the excitement doesn’t reflect on data collection and training practices. With more focus on output than training, several businesses end up delaying their time to market, losing funding, or even pulling down their shutters for eternity.

A process to annotate or tag generated data, this allows machine learning and artificial intelligence algorithms to efficiently identify each data type and decide what to learn from it and what to do with it. The more well-defined or labeled each data set is, the better the algorithms can process it for optimized results.

Alexa, is there a sushi place near me? We often ask open-ended questions like this of our virtual assistants. Asking such questions of fellow humans is understandable, considering this is how we are used to speaking and interacting. However, asking a very casual, colloquial question of a machine that hardly has any grasp of language and conversational intricacies doesn’t make any sense, right?

Well, behind every such surprising incident, there are concepts in action like artificial intelligence, machine learning, and most importantly, NLP (Natural Language Processing). One of the biggest breakthroughs of our recent times is NLP, where machines are gradually evolving to understand how humans talk, emote, comprehend, respond, analyze and even mimic human conversations and sentiment-driven behaviors. This concept has been highly influential in the development of chatbots, text-to-speech tools, voice recognition, virtual assistants, and more.

Despite being a concept introduced in the 1950s, Artificial Intelligence (AI) did not become a household name until a couple of years back. The evolution of AI has been gradual, and it has taken almost six decades to offer the features and functionalities it does today. All this has been possible due to the simultaneous evolution of hardware peripherals, tech infrastructures, allied concepts like cloud computing, data storage and processing systems (Big Data and analytics), the penetration and commercialization of the internet, and more. Together, these have led to this phase of the tech timeline, where AI and Machine Learning (ML) are not just powering innovations but becoming concepts we can hardly live without.

All conversations and discussions so far on the deployment of artificial intelligence for business and operations purposes have only been superficial. Some talk about the benefits of implementing them while others discuss how an AI module can increase productivity by 40%. But we hardly address the real challenges involved in incorporating them for our business purposes.

It is hard to imagine fighting a global pandemic without technologies such as Artificial Intelligence (AI) and Machine Learning (ML). The exponential rise of Covid-19 cases around the world left many health infrastructures paralyzed. However, institutions, governments, and organizations were able to fight back with the help of advanced technologies. Artificial intelligence and machine learning, once seen as a luxury for elevated lifestyles and productivity, have become life-saving agents in combating Covid thanks to their innumerable applications.

Shaip is an online platform that focuses on healthcare AI data solutions and offers licensed healthcare data designed to help construct AI models. It provides text-based patient medical records and claims data, audio such as physician recordings or patient/doctor conversations, and images and video in the form of X-rays, CT scans, and MRI results.

Data is one of the most important elements in developing an AI algorithm. Remember that just because data is being generated faster than ever before doesn’t mean the right data is easy to come by. Low-quality, biased, or incorrectly annotated data can (at best) add another step. These extra steps will slow you down because the data science and development teams must work through these on the way to a functional application.

Much has been made about the potential for artificial intelligence to transform the healthcare industry, and for good reason. Sophisticated AI platforms are fueled by data, and healthcare organizations have that in abundance. So why has the industry lagged behind others in terms of AI adoption? That’s a multifaceted question with many possible answers. All of them, however, will undoubtedly highlight one obstacle in particular: large amounts of unstructured data.

However, what appears simple is tedious to develop and deploy like any other complex AI system. Before your device could recognize the image that you capture and the Machine Learning (ML) modules could process it, a data annotator or a team of them would have spent thousands of hours annotating data to make them understandable by machines.

In this special guest feature, Vatsal Ghiya, CEO and co-founder of Shaip, explores the three factors that he believes will allow data-driven AI to reach its full potential in the future: the talent and resources necessary to construct innovative algorithms, an immense amount of data to accurately train those algorithms, and ample processing power to effectively mine that data. Vatsal is a serial entrepreneur with more than 20 years of experience in healthcare AI software and services. Shaip enables the on-demand scaling of its platform, processes, and people for companies with the most demanding machine learning and artificial intelligence initiatives.

Processes in Artificial Intelligence (AI) systems are evolutionary. Unlike other products, services, or systems in the market, AI models don’t offer instant use cases or immediately 100% accurate results. The results evolve with more processing of relevant and quality data. It’s like how a baby learns to talk or how a musician starts by learning the first five major chords and then builds on them. Achievements are not unlocked overnight, but training happens consistently for excellence.

Whenever we talk about Artificial Intelligence (AI) and Machine Learning (ML), what we instantly imagine are powerful tech companies, convenient and futuristic solutions, fancy self-driving cars, and basically everything that is aesthetically, creatively, and intellectually pleasing. What hardly gets projected to people is the real world behind all the conveniences and lifestyle experiences offered by AI.

An exclusive interview in which Utsav, Business Head at Shaip, talks with Sunil, Executive Editor of My Startup, about how Shaip enhances human life by solving the problems of the future with its Conversational AI and Healthcare AI offerings. He further explains how AI and ML are set to revolutionize the way we do business and how Shaip will contribute to the development of next-generation technologies.

Artificial Intelligence (AI) is making our lifestyles better through better movie recommendations, restaurant suggestions, resolving conflicts through chatbots, and more. The power, potential, and capabilities of AI are increasingly being put to good use across industries and in areas that nobody probably thought of. In fact, AI is being explored and implemented in areas such as healthcare, retail, banking, criminal justice, surveillance, hiring, fixing wage gaps, and more.

The healthcare industry was put to the test last year due to the pandemic, and a lot of innovation shone through—from new drugs and medical devices to supply-chain breakthroughs and better collaboration processes. Business leaders from all areas of the industry found new ways to accelerate growth to support the common good and generate critical revenue.

We’ve seen them in films, we’ve read about them in books, and we’ve experienced them in real life. As sci-fi as it may seem, we have to face the facts: facial recognition is here to stay. The tech is evolving at a dynamic rate, and with the diverse use cases that are popping up across industries, the wide range of developments in facial recognition simply appears to be inevitable and infinite.
