Audio Annotation for Intelligent AIs

Develop conversational and perceptive, next-gen AIs with competent audio annotation services

Why are Audio / Speech Annotation Services needed for NLP?

From in-car navigation to interactive voice assistants (VAs), speech-activated systems have lately been running the show. However, for these inventive and autonomous setups to perform accurately and efficiently, they must be fed with sectioned, segmented, and curated data.

While audio / speech data collection takes care of insight availability, feeding datasets blindly wouldn’t be much help to the models, unless they become privy to the context. This is where audio / speech labeling or annotation comes in handy, ensuring that the previously collected datasets are marked to perfection and empowered to manage specific use cases, which might include voice assistance, navigation support, translation, or more.

Put simply, audio / speech annotation for NLP is all about labeling recordings in a format that machine learning setups can subsequently understand. For instance, voice assistants like Cortana and Siri were initially fed with gargantuan volumes of annotated audio for them to be able to understand the context of our queries, emotions, sentiments, semantics, and other nuances.

Speech & Audio Annotation Tool Powered by Human Intelligence

Despite collecting data at length, machine learning models aren’t expected to understand context and relevance on their own. Self-learning AIs can, but we shall not talk about them for now. Even if self-learning NLP models were available to deploy, the initial phase of training, or rather supervised learning, would still require them to be fed with metadata-layered audio resources.

This is where Shaip comes into play by making state-of-the-art datasets available to train AI and ML setups, as per the standard use cases. With us by your side, you need not second-guess model ideation, as our professional workforce and team of expert annotators are always on the job to label and categorize speech data in relevant repositories.

- Scale the capabilities of your NLP model

- Enrich natural language processing setups with granular audio data

- Experience In-person and remote annotation facilities

- Explore the best noise-eliminating techniques like multi-label annotation, hands-on

Our Expertise

Custom Audio Labeling / Annotation isn’t a distant dream anymore

Speech & Audio labeling services have been a forte of Shaip since the beginning. Develop, train & improve conversational AI, chatbots, and speech recognition engines with our state-of-the-art audio & speech labeling solutions. Our network of qualified linguists across the globe with an experienced project management team can collect hours of multilingual audio and annotate large volumes of data to train voice-enabled applications. We also transcribe audio files to extract meaningful insights available in audio formats. Now choose the audio & speech labeling technique that best suits your goal and leave brainstorming and technicalities to Shaip.

Audio Transcription

Develop intelligent NLP models by feeding in truckloads of precisely transcribed speech / audio data. At Shaip, we let you choose from a wide set of options, including standard audio, verbatim, and multilingual transcription. Plus, you can train the models with additional speaker identifiers and time-stamping data.

Speech Labeling

Speech or Audio labeling is a standard annotation technique that concerns separating sounds and labeling them with specific metadata. The essence of this technique involves ontological identification of sounds from a piece of audio and accurately annotating them to make the training datasets more inclusive.

Audio Classification

Audio classification, used by speech annotation companies to train AIs, concerns analyzing audio recordings according to their content. With audio classification, machines can identify voices and sounds and distinguish between the two, as part of a more proactive training regime.

Multilingual Audio Data

Collecting multilingual audio data is useful only if the annotators can label and segment it accordingly. This is where multilingual audio data services come in handy, as they concern annotating speech based on the diversity of the language so that it can be identified and parsed accurately by the relevant AIs.

Natural Language Utterance

Natural Language Utterance (NLU) concerns annotating human speech to classify the smallest of details, like semantics, dialects, context, stress, and more. This form of annotated data helps train better virtual assistants and chatbots.

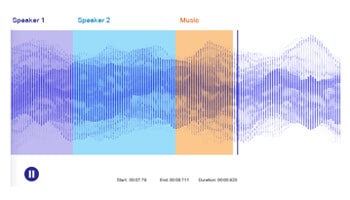

Multi-Label Annotation

Annotating audio data with multiple labels is important to help models differentiate overlapping audio sources. In this approach, an audio sample might belong to one or many classes, which need to be explicitly conveyed to the model for better decision making.
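As a rough illustration of how a multi-label annotation might be handed to a model, the sketch below turns the labels on one clip into a 0/1 vector with one position per class. The class list and the `to_multi_hot` helper are hypothetical and chosen only for this example; they are not part of any Shaip tooling.

```python
# Hypothetical label set; a real project would define its own ontology.
CLASSES = ["speech", "music", "siren", "dog_bark"]


def to_multi_hot(labels, classes=CLASSES):
    """Return a 0/1 vector with a 1 for every class present in the clip."""
    unknown = set(labels) - set(classes)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    return [1 if c in labels else 0 for c in classes]


# A clip where someone talks over background music carries two labels at once.
clip_labels = ["speech", "music"]
vector = to_multi_hot(clip_labels)
```

Unlike a single-label scheme, more than one position in the vector can be set, which is what lets a model learn that overlapping sources are present in the same recording.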

Speaker Diarization

It involves splitting an input audio file into homogeneous segments associated with individual speakers. Diarization means identifying speaker boundaries and grouping the audio into segments to determine the number of distinct speakers. This process helps automate conversation analysis and the transcription of call centre dialogues, medical and legal conversations, and meetings.
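To make the idea concrete, here is a minimal sketch of what diarization output could look like and how it might be summarized. The `(start, end, speaker)` tuple layout, the speaker names, and the `talk_time` helper are assumptions made for illustration, not a standard format.

```python
from collections import defaultdict

# Hypothetical diarization output: (start_sec, end_sec, speaker_id).
segments = [
    (0.0, 4.5, "agent"),
    (4.5, 9.0, "customer"),
    (9.0, 12.0, "agent"),
]


def talk_time(segments):
    """Sum the duration of every segment per speaker."""
    totals = defaultdict(float)
    for start, end, speaker in segments:
        if end < start:
            raise ValueError("segment ends before it starts")
        totals[speaker] += end - start
    return dict(totals)


totals = talk_time(segments)
num_speakers = len(totals)  # number of distinct speakers in the recording
```

Once segments carry speaker labels like this, downstream steps such as per-speaker transcription or call analysis can work on each speaker's portions separately.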

Phonetic Transcription

Unlike regular transcription that converts audio into a sequence of words, a phonetic transcription notes how words are pronounced and visually represents the sounds using phonetic symbols. Phonetic transcription makes it easier to note the difference in pronunciation of the same language in several dialects.
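A small sketch of the difference: the word-level transcript is the same string in every dialect, while the phonetic layer may differ. The dictionary below is illustrative only; the IPA strings are common dictionary pronunciations, and the `differs_by_dialect` helper and locale keys are hypothetical.

```python
# Illustrative entries only: the same word with its IPA form per dialect.
PRONUNCIATIONS = {
    "tomato": {"en-US": "təˈmeɪtoʊ", "en-GB": "təˈmɑːtəʊ"},
    "cat": {"en-US": "kæt", "en-GB": "kæt"},
}


def differs_by_dialect(word):
    """True if the word has more than one distinct phonetic form on record."""
    return len(set(PRONUNCIATIONS[word].values())) > 1


tomato_differs = differs_by_dialect("tomato")
cat_differs = differs_by_dialect("cat")
```

A regular transcript would record "tomato" identically for both speakers; only the phonetic annotation preserves how each one actually said it.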

Types of Audio Classification

Acoustics Data Classification

It attempts to categorize sounds or audio signals into predefined classes based on the environment in which the audio was recorded. The audio data annotators have to classify the recordings by identifying where they were recorded, such as schools, homes, cafes, public transport, etc. This technology helps develop speech recognition software, virtual assistants, audio libraries for multimedia, and audio-based surveillance systems.

Environmental Sound Classification

It is a critical part of audio recognition technology, where sounds are recognized and classified based on the environments in which they originate. Identifying environmental sound events, such as horns, sirens, or children playing, is difficult because they do not follow static patterns like music, rhythms, or semantic phonemes. This system helps develop enhanced security systems that recognize break-ins and gunshots, and it supports predictive maintenance.

Music Classification

Music classification automatically analyses and classifies music based on the genre, instruments, mood, and ensemble. It also helps develop music libraries for enhanced organizing and retrieving of annotated pieces of music. This technology is increasingly used in finetuning user recommendations, identifying musical similarities, and providing musical preferences.

Natural Language Utterance Classification

NLU is a crucial part of Natural Language Processing technology that helps machines understand human speech. The two main concepts of NLU are intents and utterances. NLU classifies minor details of human speech such as dialect, meaning, and semantics. This technology helps develop advanced chatbots and virtual assistants that understand human speech better.

Reasons to choose Shaip as your Trustworthy Audio Annotation Partner

People

Dedicated and trained teams:

- 30,000+ collaborators for Data Creation, Labeling & QA

- Credentialed Project Management Team

- Experienced Product Development Team

- Talent Pool Sourcing & Onboarding Team

Process

Highest process efficiency is assured with:

- Robust 6 Sigma Stage-Gate Process

- A dedicated team of 6 Sigma black belts – Key process owners & Quality compliance

- Continuous Improvement & Feedback Loop

Platform

The patented platform offers benefits:

- Web-based end-to-end platform

- Impeccable Quality

- Faster TAT

- Seamless Delivery

Why you should outsource Audio Data Labeling / Annotation

Dedicated Team

It is estimated that data scientists spend over 80% of their time on data cleaning and data preparation. With outsourcing, your team of data scientists can focus on developing robust algorithms, leaving the tedious part of the job to us.

Better Quality

Dedicated domain experts who annotate day in and day out will do a superior job compared to a team that needs to fit annotation tasks into its busy schedule. Needless to say, this results in better output.

Scalability

Even an average Machine Learning (ML) model requires labeling large chunks of data, which forces companies to pull in resources from other teams. As data annotation consultants, we offer domain experts who work exclusively on your projects and can easily scale operations as your business grows.

Eliminate Internal Bias

One reason AI models fail is that teams working on data collection and annotation unintentionally introduce bias, skewing the end result and affecting accuracy. A data annotation vendor does a better job of annotating the data for improved accuracy by eliminating assumptions and bias.

Services Offered

Expert audio annotation isn’t all that comprehensive AI setups need. At Shaip, you can also consider the following services to extend what your models can handle:

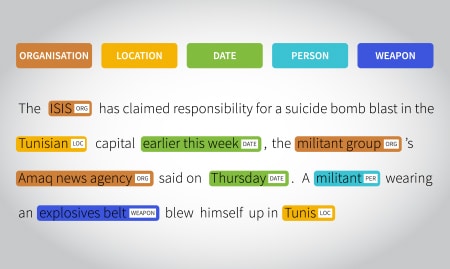

Text Annotation Services

We specialize in making textual data training ready by annotating exhaustive datasets, using entity annotation, text classification, sentiment annotation, and other relevant tools.

Image Annotation Services

We take pride in labeling and segmenting image datasets to train discerning computer vision models. Some of the relevant techniques include boundary recognition & image classification.

Video Annotation Services

Shaip offers high-end video labeling services for training Computer Vision models. The aim here is to make datasets usable with tools like pattern recognition, object detection, and more.

Recommended Resources

Buyer’s Guide

Buyer’s Guide for Conversational AI

The chatbot you conversed with runs on an advanced conversational AI system that is trained, tested, and built using tons of speech recognition datasets.

Offerings

Speech Data Collection Services for your AIs

Shaip offers end-to-end speech / audio data collection services in 150+ languages to enable voice-enabled technologies to cater to a diverse set of audiences across the globe.

Blog

What is Audio / Speech Annotation With Example

We have all asked Alexa (or other voice assistants) some open-ended questions. Alexa, is the nearest pizza place open? Alexa, which restaurant in my location offers free delivery to my address?

Featured Clients

Empowering teams to build world-leading AI products.

Get Audio Annotation Experts On-board.

Now prepare well-researched, granular, segmented, and multi-labeled audio datasets for intelligent AIs

Frequently Asked Questions (FAQ)

1. What is audio annotation, and why is it important for NLP?

Audio annotation labels and segments audio data to train AI and NLP models. It helps systems understand speech, sounds, and context for applications like voice assistants and chatbots.

2. Why is audio annotation crucial for training voice assistants like Alexa or Siri?

Audio annotation helps voice assistants understand user queries, tone, and intent, enabling accurate and responsive interactions.

3. How does speaker diarization help in call center automation?

Speaker diarization separates speakers in audio files, helping call centers analyze conversations and improve customer service.

4. What is phonetic transcription, and how is it different from regular transcription?

Phonetic transcription captures how words are pronounced using symbols, while regular transcription converts speech into text without pronunciation details.

5. How does audio annotation improve environmental sound classification?

It categorizes sounds like sirens or footsteps, helping AI systems recognize and interpret environmental noises for security and maintenance.

6. What types of audio annotation does Shaip offer?

Shaip offers phonetic transcription, speaker diarization, NLU, speech labeling, multi-label annotation, and audio classification.

7. How does Shaip ensure quality and accuracy in audio annotation services?

Shaip uses expert annotators, advanced tools, and strict quality checks to deliver accurate and unbiased audio datasets.

8. Why is multi-label annotation important in training AI for overlapping audio sources?

Multi-label annotation helps AI identify and classify multiple sounds in one audio file, essential for complex applications.

9. How does audio annotation enhance AI-powered speech recognition systems?

It provides labeled data to help systems identify words, accents, and intent, improving transcription and understanding.

10. What are the challenges in annotating multilingual audio datasets?

Challenges include handling accents and dialects. Shaip manages this with global linguists and scalable processes.

11. How do companies handle large-scale audio annotation projects?

Shaip uses scalable solutions, expert teams, and advanced platforms to deliver large projects quickly and accurately.

12. What are the costs and benefits of outsourcing audio annotation services?

Outsourcing saves time, ensures expert annotation, and provides high-quality data for better AI performance.

13. Why should businesses choose Shaip for audio annotation services?

Shaip offers accurate multilingual datasets, scalable solutions, and expertise to improve AI systems like virtual assistants and security applications.