Demand for user-generated content keeps growing in today’s dynamic business world, and content moderation is gaining attention along with it.

Whether it is social media posts, product reviews, or blog comments, user-generated content generally offers a more engaging and authentic way to promote a brand. Unfortunately, this content does not always meet the required standards, which makes effective content moderation a challenge.

AI content moderation helps ensure that the content on your platform aligns with your company’s goals and fosters a safe online environment for users. Let us look at the landscape of content moderation, its types, and its role in optimizing content for brands.

AI Content Moderation: An Insightful Overview

AI content moderation is a digital process that uses AI technologies to monitor, filter, and manage user-generated content across digital platforms.

Content moderation aims to ensure that the content posted by users complies with community standards, platform guidelines, and legal regulations.

Content moderation involves screening and analyzing text, images, and videos to identify and address problematic content.

Content moderation serves multiple purposes, such as:

- Filtering out inappropriate or harmful content

- Minimizing legal risks

- Maintaining brand safety

- Improving speed, consistency, and business scalability

- Enhancing user experience

Let us take a closer look at the different types of content moderation and the role each one plays:


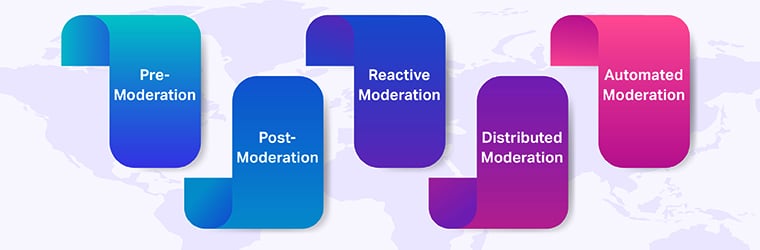

A Glimpse into the Content Moderation Journey: 5 Key Methods

Here are five common moderation methods, each of which differs in when and how content is reviewed:

Pre-Moderation

Pre-moderation involves reviewing and approving content before it is published on a platform. This method offers tight control over content and ensures that only content meeting specific business guidelines goes live. Although it is highly effective at maintaining content quality, it can slow down content distribution because every item requires human review and approval.
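To make the pre-moderation flow concrete, here is a minimal Python sketch of a review queue. It assumes a single in-memory store and a reviewer who calls `review()` for each item; `PreModerationQueue`, `submit`, `review`, and `published` are hypothetical names, not any platform’s API. The key property is that `published()` only ever returns approved items.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Submission:
    id: int
    text: str
    status: Status = Status.PENDING  # every new item starts unpublished


@dataclass
class PreModerationQueue:
    """Hypothetical in-memory queue: content stays hidden until a reviewer approves it."""
    submissions: dict[int, Submission] = field(default_factory=dict)
    next_id: int = 1

    def submit(self, text: str) -> int:
        sub = Submission(self.next_id, text)
        self.submissions[sub.id] = sub
        self.next_id += 1
        return sub.id

    def review(self, sub_id: int, approve: bool) -> None:
        # The reviewer's decision is the only way an item leaves the pending state.
        self.submissions[sub_id].status = Status.APPROVED if approve else Status.REJECTED

    def published(self) -> list[str]:
        # Only approved items are ever visible to users.
        return [s.text for s in self.submissions.values() if s.status is Status.APPROVED]


queue = PreModerationQueue()
good = queue.submit("Great product, fast delivery!")
spam = queue.submit("Buy cheap followers here!!!")
print(queue.published())  # [] : nothing is live before review
queue.review(good, approve=True)
queue.review(spam, approve=False)
print(queue.published())  # ['Great product, fast delivery!']
```

Because every item waits in the pending state, throughput is bounded by how quickly reviewers work, which is the slowdown described above.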

Real-World Example:

Amazon is a popular brand that uses content moderation to keep its content appropriate. Because Amazon handles thousands of product images and videos, its Amazon Rekognition tool is used to validate this content. Applying a pre-moderation approach, it reportedly detects over 80% of the explicit content that could harm the company’s reputation.

Post-Moderation

In contrast to pre-moderation, post-moderation lets users publish content in real time without prior review. The content goes live immediately and is reviewed afterward. This approach distributes content more quickly, but it also risks inappropriate or harmful content being published before it is caught.
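A post-moderation feed can be sketched as follows. This is a hypothetical, in-memory illustration: items become visible the moment they are published and are removed only when a later review pass, represented by the `is_violation` callable, decides they break the rules. `PostModerationFeed` and its methods are invented names for this example.

```python
from collections.abc import Callable
from dataclasses import dataclass, field


@dataclass
class PostModerationFeed:
    """Hypothetical feed: items go live at once and are reviewed afterward."""
    live: list[str] = field(default_factory=list)
    review_backlog: list[str] = field(default_factory=list)
    removed: list[str] = field(default_factory=list)

    def publish(self, text: str) -> None:
        # No gate: the item is visible immediately and also queued for later review.
        self.live.append(text)
        self.review_backlog.append(text)

    def run_review(self, is_violation: Callable[[str], bool]) -> None:
        # is_violation stands in for a human reviewer or a model; True means take it down.
        for text in self.review_backlog:
            if is_violation(text) and text in self.live:
                self.live.remove(text)
                self.removed.append(text)
        self.review_backlog.clear()


feed = PostModerationFeed()
feed.publish("Check out my travel vlog")
feed.publish("Pirated full movie link")
print(feed.live)  # both items are visible before the review pass
feed.run_review(lambda text: "pirated" in text.lower())
print(feed.live)  # ['Check out my travel vlog']
```

The window between `publish()` and `run_review()` is exactly the period in which harmful content can be seen.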

Real-World Example:

YouTube is a classic example. It lets users upload and publish videos first, then reviews them and flags those with inappropriate content or copyright issues.

Reactive Moderation

Reactive moderation is a technique some online communities use to catch inappropriate content. It is generally combined with the pre- or post-moderation method and relies on user reports or automated flagging systems to identify content violations. Moderators then assess the flagged content and take the necessary action, such as removing it.
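Reactive moderation is often driven by a report threshold. The sketch below is a hypothetical illustration: it escalates an item to human moderators once a set number of distinct users have reported it, or immediately when an automated detector calls `auto_flag()`. The class name and the default threshold of 3 are assumptions, not values from any real platform.

```python
from collections import defaultdict


class ReactiveModerator:
    """Hypothetical report counter that escalates content to human moderators."""

    def __init__(self, report_threshold: int = 3) -> None:
        self.report_threshold = report_threshold  # assumed value, tune per community
        self.reporters: defaultdict[str, set[str]] = defaultdict(set)
        self.escalated: list[str] = []

    def _escalate(self, item_id: str) -> bool:
        if item_id in self.escalated:
            return False
        self.escalated.append(item_id)  # now waiting for a moderator's decision
        return True

    def report(self, item_id: str, user_id: str) -> bool:
        """Record a user report; return True if this report triggered escalation."""
        # A set ignores repeat reports, so one user cannot escalate an item alone.
        self.reporters[item_id].add(user_id)
        if len(self.reporters[item_id]) >= self.report_threshold:
            return self._escalate(item_id)
        return False

    def auto_flag(self, item_id: str) -> bool:
        """An automated detector can escalate an item without waiting for reports."""
        return self._escalate(item_id)


mod = ReactiveModerator(report_threshold=2)
mod.report("post-17", "alice")
mod.report("post-17", "alice")  # duplicate report, ignored
print(mod.report("post-17", "bob"))  # True: second distinct reporter
print(mod.escalated)  # ['post-17']
```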

Real-World Example:

Facebook uses reactive moderation to screen content on its platform. Users can flag inappropriate content, and Facebook takes the required action based on the collective reports. Facebook has also developed AI for content moderation that reportedly achieves a success rate of over 90% in flagging content.

Distributed Moderation

Distributed moderation relies on user participation to rate content and determine whether it is right for the brand. Users vote on submitted content, and the average rating decides which content gets posted.
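The voting mechanism can be sketched as an average-rating rule. This minimal Python example assumes 1 to 5 ratings keyed by user ID (so each user counts once), a minimum number of votes before any decision, and a publish threshold of 3.0; the function name and all of these values are illustrative assumptions.

```python
from statistics import mean


def distributed_decision(
    votes: dict[str, int], min_votes: int = 5, threshold: float = 3.0
) -> str:
    """Decide an item's fate from community ratings.

    votes maps user ID -> rating on an assumed 1-5 scale, so each user counts once.
    min_votes and threshold are illustrative assumptions, not standard values.
    """
    if len(votes) < min_votes:
        return "pending"  # not enough community input yet
    return "publish" if mean(list(votes.values())) >= threshold else "hide"


print(distributed_decision({"u1": 4, "u2": 5, "u3": 2, "u4": 4, "u5": 3}))  # publish
print(distributed_decision({"u1": 1, "u2": 2}))  # pending
```

Requiring a minimum number of votes is one simple way to keep a handful of users from deciding an item’s fate on their own.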

The main downside of distributed moderation is that brands find it highly challenging to adopt: trusting users to moderate content carries a number of branding and legal risks.

Real-World Example:

Wikipedia uses distributed moderation to maintain accuracy and content quality. Its community of editors and administrators reviews contributions so that only accurate, appropriate content stays on the site.

Automated Moderation

Automated moderation is a simple yet effective technique that uses filters to catch words from a predefined list and then applies preset rules to filter out content. The underlying algorithms identify patterns commonly associated with harmful content. Because it filters content quickly and at scale, this method lets platforms publish clean content efficiently, which can support higher engagement and website traffic.
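A minimal word-list filter with a few preset rules might look like the sketch below. The blocklist, the link limit, and the capital-letter rule are hypothetical examples chosen for illustration; real systems use much larger curated lists and trained models alongside rules like these.

```python
import re

# Hypothetical blocklist; real platforms maintain much larger, curated lists.
BLOCKLIST = {"scam", "idiot", "freemoney"}


def automated_filter(text: str, max_links: int = 2) -> tuple[str, list[str]]:
    """Return ("allow" or "block", reasons) using a word list plus preset rules."""
    reasons: list[str] = []

    # Rule 1: whole-word match against the blocklist (case-insensitive).
    words = set(re.findall(r"[a-z0-9']+", text.lower()))
    hits = sorted(words & BLOCKLIST)
    if hits:
        reasons.append("blocked words: " + ", ".join(hits))

    # Rule 2: too many links is a common spam pattern.
    links = re.findall(r"https?://\S+", text)
    if len(links) > max_links:
        reasons.append(f"too many links ({len(links)})")

    # Rule 3: mostly upper-case messages are treated as shouting or spam.
    letters = [c for c in text if c.isalpha()]
    if len(letters) >= 10 and sum(c.isupper() for c in letters) / len(letters) > 0.8:
        reasons.append("excessive capitals")

    return ("block" if reasons else "allow", reasons)


print(automated_filter("Nice post, thanks for sharing"))  # ('allow', [])
print(automated_filter("This is a SCAM, you idiot"))  # ('block', ['blocked words: idiot, scam'])
```

Returning the reasons alongside the decision makes it easier for human moderators to audit or overturn automated blocks.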

Real-World Example:

Gaming platforms, including PlayStation and Xbox, use automated moderation. These platforms rely on automated methods to detect and penalize players who violate game rules or use cheats.

AI-Powered Use Cases in Content Moderation

AI content moderation can identify and remove the following types of content:

- Explicit 18+ Content: Sexually explicit material, including nudity, vulgarity, or sexual acts.

- Aggressive Content: Content that contains threats, harassment, or harmful language. It may target individuals or groups and often violates community guidelines.

- Content with Inappropriate Language: Content that contains offensive, vulgar, or inappropriate language, such as swear words and slurs, that may offend or hurt others.

- Deceptive or False Content: False information intentionally spread to misinform or manipulate audiences.

AI content moderation helps detect and remove all of these content types, leaving platforms with more accurate and reliable content.

Tackling Data Diversity Using Content Moderation

Digital content comes in many types and forms, so each type requires a specialized moderation approach to achieve optimal results:


Text Data

Text content is moderated using natural language processing (NLP) algorithms. These algorithms use sentiment analysis to identify the tone of a piece of content, and they analyze the written text to detect spam or other harmful content.

Text moderation can also use entity recognition, which identifies the people, companies, and other entities mentioned in the content; these details can help predict whether the content is fake. Based on the identified patterns, the content is labeled as safe, flagged, or unsafe, and safe content can then be posted.
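The labeling step can be illustrated with a toy text moderator. This sketch swaps in tiny hand-written word lists for real NLP models, so it only demonstrates the flow: threat words make text `unsafe`, strongly negative tone or spam signals make it `flagged` for review, and everything else is `safe`. Entity recognition is not modeled, and the word lists, function names, and the -0.5 cutoff are all assumptions.

```python
import re

# Toy lexicons for illustration only; production systems use trained NLP models.
POSITIVE = {"good", "great", "love", "excellent", "helpful", "thanks"}
NEGATIVE = {"bad", "hate", "awful", "terrible", "worst", "stupid"}
THREATS = {"kill", "hurt", "attack"}


def sentiment_score(tokens: list[str]) -> float:
    """Crude lexicon sentiment in [-1, 1]: (positive - negative) / sentiment-bearing words."""
    pos = sum(t in POSITIVE for t in tokens)
    neg = sum(t in NEGATIVE for t in tokens)
    return 0.0 if pos + neg == 0 else (pos - neg) / (pos + neg)


def looks_like_spam(text: str) -> bool:
    # Simple spam signals: a link, or a run of five or more identical characters ("!!!!!").
    return bool(re.search(r"https?://", text) or re.search(r"(.)\1{4,}", text))


def moderate_text(text: str) -> str:
    """Label text as "safe", "flagged" (needs human review), or "unsafe"."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if any(t in THREATS for t in tokens):
        return "unsafe"
    if sentiment_score(tokens) <= -0.5 or looks_like_spam(text):
        return "flagged"
    return "safe"


print(moderate_text("Great product, thanks!"))  # safe
print(moderate_text("Worst service ever, I hate it"))  # flagged
```

Note that a lexicon approach misses sarcasm and context, which is why real systems rely on trained models and route borderline cases to human reviewers.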

Voice Data

Voice content moderation has become increasingly important with the rise of voice assistants and voice-activated devices. Voice content is moderated using a mechanism known as voice analysis.

Voice analysis is powered by AI and provides:

- Transcription of speech into text.

- Sentiment analysis of the content.

- Interpretation of the voice tone.
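The three voice-analysis steps can be chained as in the following sketch. Speech recognition is represented by a `transcribe` callable you would supply (a hypothetical stand-in for any speech-to-text service), the transcript check uses a tiny assumed word list in place of full sentiment analysis, and tone is approximated by root-mean-square (RMS) loudness, which only detects raised voices rather than interpreting tone in full. The 0.5 loudness cutoff is an assumption.

```python
import math
from collections.abc import Callable, Sequence
from dataclasses import dataclass

# Hypothetical word list; in practice the transcript would go through full text moderation.
BLOCKED = {"idiot", "scam"}


@dataclass
class VoiceResult:
    transcript: str
    blocked_words: list[str]
    loudness_rms: float
    shouting: bool


def rms(samples: Sequence[float]) -> float:
    """Root-mean-square amplitude of samples in [-1.0, 1.0]: a crude loudness measure."""
    return math.sqrt(sum(s * s for s in samples) / len(samples)) if samples else 0.0


def moderate_voice(
    samples: Sequence[float],
    transcribe: Callable[[Sequence[float]], str],
    shout_rms: float = 0.5,
) -> VoiceResult:
    # 1. Speech to text: `transcribe` stands in for any speech recognition service.
    transcript = transcribe(samples)
    # 2. Screen the transcript (sentiment analysis would also run at this step).
    words = {w.strip(".,!?") for w in transcript.lower().split()}
    hits = sorted(words & BLOCKED)
    # 3. Tone: a high RMS level is treated as a raised voice (shout_rms is an assumed cutoff).
    level = rms(samples)
    return VoiceResult(transcript, hits, level, level >= shout_rms)


result = moderate_voice([0.9, -0.9, 0.8, -0.8], lambda _samples: "You are an idiot!")
print(result.blocked_words, result.shouting)  # ['idiot'] True
```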

Image Data

When it comes to moderating images, techniques such as image processing, vision-based search, and text classification (for any text that appears in an image) come in handy. These techniques analyze each image thoroughly to detect harmful content. If the image contains no harmful content, it is sent for publishing; otherwise, it is flagged.
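Many image moderation services return category labels with confidence scores, so the publish-or-flag decision becomes a thresholding step. The sketch below works on a dictionary shaped like the `ModerationLabels` list returned by Amazon Rekognition’s `DetectModerationLabels` operation (entries with `Name`, `ParentName`, and a `Confidence` percentage). The sample values, the blocked categories, the function name, and the 80% cutoff are assumptions for illustration.

```python
# Sample response shaped like Rekognition's DetectModerationLabels output; values are invented.
SAMPLE_RESPONSE = {
    "ModerationLabels": [
        {"Name": "Violence", "ParentName": "", "Confidence": 92.4},
        {"Name": "Weapons", "ParentName": "Violence", "Confidence": 88.1},
    ]
}

# Assumed policy: categories this hypothetical platform never publishes.
BLOCKED_CATEGORIES = {"Explicit Nudity", "Violence", "Hate Symbols"}


def image_decision(response: dict, min_confidence: float = 80.0) -> tuple[str, list[str]]:
    """Return ("publish", []) or ("flag", matched label names) for one image."""
    matched = []
    for label in response.get("ModerationLabels", []):
        # A label matches if it, or its parent category, is on the blocked list.
        names = {label["Name"], label.get("ParentName", "")}
        if label["Confidence"] >= min_confidence and names & BLOCKED_CATEGORIES:
            matched.append(label["Name"])
    return ("flag", matched) if matched else ("publish", [])


print(image_decision(SAMPLE_RESPONSE))  # ('flag', ['Violence', 'Weapons'])
```

With AWS credentials configured, a real response could come from `boto3.client("rekognition").detect_moderation_labels(Image={"Bytes": image_bytes}, MinConfidence=80)`; check the current Rekognition label taxonomy before relying on specific category names.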

Video Data

Video moderation requires analyzing the audio, the video frames, and any text within a video. To do so, it combines the mechanisms described above for text, images, and voice. This helps ensure that inappropriate content is identified and removed quickly, creating a safe online environment.
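Combining the per-type checks for video can be sketched as follows. The example assumes the video has already been split into sampled segments, each with a frame, a transcript of the nearby audio, and any on-screen text; `Segment`, `moderate_video`, `image_check`, and `text_check` are hypothetical names, with the two callables standing in for image and text moderators like those described above. It returns timestamps so reviewers can jump straight to the problem spots.

```python
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class Segment:
    """One sampled moment of a video: a frame, nearby speech, and any on-screen text."""
    timestamp_s: float
    frame: bytes
    transcript: str
    on_screen_text: str


def moderate_video(
    segments: list[Segment],
    image_check: Callable[[bytes], bool],
    text_check: Callable[[str], bool],
) -> list[tuple[float, str]]:
    """Return (timestamp, source) pairs for every check that finds a violation.

    Both checks return True when content violates policy; the transcript check
    covers the audio track once it has been converted to text.
    """
    findings: list[tuple[float, str]] = []
    for seg in segments:
        if image_check(seg.frame):
            findings.append((seg.timestamp_s, "frame"))
        if text_check(seg.transcript):
            findings.append((seg.timestamp_s, "audio"))
        if text_check(seg.on_screen_text):
            findings.append((seg.timestamp_s, "on-screen text"))
    return findings


segments = [
    Segment(0.0, b"frame-0", "welcome to my channel", ""),
    Segment(5.0, b"frame-5", "this is a scam", "free money"),
]
print(moderate_video(segments, image_check=lambda f: False, text_check=lambda t: "scam" in t))
# [(5.0, 'audio')]
```

An empty result means the video passes all checks; any finding can trigger removal or human review.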

Conclusion

AI-driven content moderation is a potent tool for maintaining content quality and safety across various data types. As user-generated content continues to grow, platforms must adopt effective moderation strategies that scale and support their credibility and growth. If you are interested in content moderation for your business, get in touch with our Shaip team.