A successful machine learning model starts with high-quality training data. But one of the most common questions teams ask at the start of an AI project is: how much training data is enough?

The honest answer is that there is no fixed number that works for every project. The amount of data you need depends on the task, the complexity of the model, the number of classes, data quality, label accuracy, and the performance standard you want to reach.

In practice, the best way to estimate training data requirements is to begin with a representative sample, train on progressively larger subsets, and measure when model performance starts to level off. This helps teams make informed decisions about cost, timeline, annotation effort, and expected outcomes.

In this blog, we break down the main factors that affect training data volume, explain how to estimate requirements in practice, and show what to do when you need more data without delaying your AI roadmap.

Why Training Data Matters

Training data is the foundation of every machine learning system. No matter how advanced the algorithm is, it can only learn patterns that are present in the data used to train it. If the data is incomplete, biased, noisy, or too limited, the model will struggle to generalize in the real world.

Strong training data helps teams:

- improve model accuracy

- reduce bias and blind spots

- estimate project cost and feasibility more accurately

- reduce rework during model iteration

- build more reliable validation and testing pipelines

This is why data collection, cleaning, labeling, and validation often take up the largest share of effort in AI projects. If the data is weak, predictions will be weak too.

There Is No Universal Number — But There Is a Practical Way to Estimate It

Many articles try to answer this question with a single number. That is rarely useful.

A model for simple binary classification may perform well with a relatively small dataset, while a large language model fine-tuning workflow or a computer vision system for edge cases may require significantly more examples. The better question is not “what is the magic number?” but:

What is the minimum amount of high-quality, representative training data needed to reach the target performance for this use case?

A practical way to answer this is to use learning curves: train the model on increasing amounts of data and observe how much performance improves with each step. When improvement starts flattening, you have a much clearer signal of whether collecting more data is worth the investment. This approach is commonly recommended in practical ML workflows.

7 Factors That Determine How Much Training Data You Need

1. Model Type: Classical ML vs Deep Learning

The type of model has a major impact on data requirements. Classical machine learning models such as logistic regression, decision trees, or gradient boosting can often perform well on smaller structured datasets, especially when features are well engineered.

Deep learning models generally require more data because they learn features automatically and contain many more parameters. For image, audio, and language tasks, deep models usually benefit significantly from additional data volume and diversity.

2. Supervised vs Unsupervised Learning

Supervised learning requires labeled data, which is often harder and more expensive to collect. If your model needs humans to annotate images, transcribe audio, tag entities, or classify documents, the data requirement must account for both quantity and labeling effort.

Unsupervised learning does not require labeled data, but it still benefits from large, representative datasets. Even without labels, the model needs enough coverage to detect meaningful patterns and structure.

3. Task Complexity and Number of Classes

A simple binary classification task is very different from a multi-class medical imaging problem or a multilingual speech recognition system.

As task complexity increases, training data requirements usually rise because the model must learn:

- more classes

- finer distinctions between categories

- more edge cases

- more contextual variability

For example, distinguishing “cat” vs “dog” is far easier than identifying dozens of visually similar product defects across lighting conditions, camera angles, and backgrounds.

4. Data Quality and Label Accuracy

More data is not always better if the quality is poor.

A smaller dataset with accurate labels, balanced representation, and consistent formatting can outperform a larger but noisy dataset. Low-quality labels, duplicate records, weak class definitions, missing metadata, and inconsistent annotation guidelines all reduce model performance.

Before collecting more data, teams should ask:

- Are labels consistent?

- Are we covering all important user scenarios?

- Is the data representative of production conditions?

- Are train, validation, and test sets properly separated?

For many projects, improving data quality produces faster gains than simply increasing data volume.
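One way to put a number on the "Are labels consistent?" question is to have two annotators label the same sample and measure their agreement. The sketch below computes Cohen's kappa, a standard chance-corrected agreement score, in plain Python. The annotator lists are made-up illustration data, and what counts as "good enough" agreement depends on your project.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and the same length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability that both pick the same class independently.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical labels from two annotators on the same eight items.
annotator_1 = ["cat", "cat", "dog", "dog", "cat", "dog", "cat", "dog"]
annotator_2 = ["cat", "cat", "dog", "cat", "cat", "dog", "dog", "dog"]
print(round(cohens_kappa(annotator_1, annotator_2), 2))  # 0.5
```

Here the annotators agree on 6 of 8 items (75%), but half of that agreement would be expected by chance, so kappa is only 0.5. A low score like this usually points to unclear class definitions or annotation guidelines rather than a lack of data.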

5. Diversity, Coverage, and Class Balance

A model should learn from the real-world variability it will face after deployment. That means the dataset should reflect different scenarios, user groups, device types, accents, environments, document formats, image conditions, and edge cases.

If one class or segment is underrepresented, the model may appear accurate overall while failing badly on critical subgroups. This is why diversity and class balance matter just as much as raw size.

In many cases, the question is not “Do we have enough data?” but “Do we have enough of the right data?”
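A quick check for "enough of the right data" is to look at how the examples are distributed across classes before training anything. The following is a minimal sketch: the `min_share` threshold of 10% and the defect labels are assumptions chosen for illustration, not recommended values.

```python
from collections import Counter

def class_balance_report(labels, min_share=0.10):
    """Return each class's share of the dataset and the classes below min_share."""
    counts = Counter(labels)
    total = len(labels)
    shares = {cls: count / total for cls, count in counts.items()}
    underrepresented = sorted(cls for cls, share in shares.items() if share < min_share)
    return shares, underrepresented

# Hypothetical product-inspection dataset with two rare defect classes.
labels = ["ok"] * 90 + ["scratch"] * 8 + ["dent"] * 2
shares, flagged = class_balance_report(labels)
print(shares)
print(flagged)  # ['dent', 'scratch']
```

The same idea extends to any segment you care about, such as device type, accent, or document format, as long as that metadata is recorded with each example.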

6. Transfer Learning and Pre-trained Models

If you are starting from a pre-trained model, you may need far less task-specific data than if you train from scratch.

This is especially true for:

- image classification using vision backbones

- NLP tasks using transformer-based models

- speech models adapted to a new accent or domain

- domain adaptation workflows

Transfer learning allows teams to reuse knowledge learned on large existing datasets, which can dramatically reduce annotation burden.

7. Validation Strategy and Target Performance

The amount of data you need is also shaped by how good the model needs to be.

A prototype may work with modest amounts of data. A production model in healthcare, finance, insurance, automotive, or compliance-heavy environments will require stronger coverage, cleaner labels, better validation, and more reliable performance across edge cases. The stricter the acceptable error rate, the more robust your dataset must be.

How to Estimate Training Data Requirements in Practice

Instead of guessing, use a structured estimation process.

Step 1: Start with a Representative Pilot Dataset

Collect a smaller but representative sample of the problem space. Include important classes, formats, user types, and real-world variations.

Step 2: Split the Data Properly

Create separate training, validation, and test sets. Make sure the test set reflects production conditions and is never used during training.
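As a rough illustration of this step, the sketch below splits a labeled dataset into training, validation, and test sets while keeping each class's proportions roughly the same in all three (a stratified split). The 70/15/15 ratio and the toy data are assumptions for the example. Libraries such as scikit-learn provide their own splitting utilities, which you would normally use instead.

```python
import random
from collections import defaultdict

def stratified_split(examples, labels, fractions=(0.7, 0.15, 0.15), seed=0):
    """Split into train/validation/test while keeping each class's proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for example, label in zip(examples, labels):
        by_class[label].append((example, label))
    train, val, test = [], [], []
    for items in by_class.values():
        rng.shuffle(items)
        n_train = round(len(items) * fractions[0])
        n_val = round(len(items) * fractions[1])
        train += items[:n_train]
        val += items[n_train:n_train + n_val]
        test += items[n_train + n_val:]  # remainder goes to the test set
    return train, val, test

# Hypothetical dataset: 100 example ids, 80 of class "a" and 20 of class "b".
examples = list(range(100))
labels = ["a"] * 80 + ["b"] * 20
train, val, test = stratified_split(examples, labels)
print(len(train), len(val), len(test))  # 70 15 15
```

Because every example lands in exactly one of the three sets, the test set stays untouched during training. Note that this sketch does not remove duplicates or near-duplicates, which can still leak across sets.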

Step 3: Train on Progressively Larger Samples

Train the model using increasing portions of the dataset, such as 10%, 20%, 40%, 60%, 80%, and 100%.

Step 4: Plot a Learning Curve

Track performance metrics such as accuracy, F1 score, recall, precision, or task-specific quality measures as dataset size increases.
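Steps 3 and 4 can be sketched in a few lines. The example below uses a deliberately tiny setup so it runs without any ML library: a synthetic one-feature, two-class task and a nearest-centroid "model". Both are stand-ins for your real data and model, and all names here are hypothetical. The part that carries over is the loop, which trains on growing subsets and scores each one against the same fixed validation set.

```python
import random

def make_data(n, rng):
    """Hypothetical toy task: class 0 centered at 0.0, class 1 centered at 2.0."""
    data = []
    for _ in range(n):
        label = rng.randint(0, 1)
        data.append((rng.gauss(2.0 * label, 1.0), label))
    return data

def fit_centroids(train):
    """Nearest-centroid 'model': the mean feature value of each class."""
    sums, counts = {0: 0.0, 1: 0.0}, {0: 0, 1: 0}
    for x, y in train:
        sums[y] += x
        counts[y] += 1
    return {c: sums[c] / counts[c] for c in sums if counts[c]}

def accuracy(centroids, data):
    """Share of examples whose nearest centroid matches the true label."""
    correct = 0
    for x, y in data:
        predicted = min(centroids, key=lambda c: abs(x - centroids[c]))
        correct += predicted == y
    return correct / len(data)

rng = random.Random(42)
train_pool, validation = make_data(1000, rng), make_data(500, rng)

learning_curve = []
for fraction in (0.1, 0.2, 0.4, 0.6, 0.8, 1.0):
    subset = train_pool[: round(len(train_pool) * fraction)]
    model = fit_centroids(subset)
    learning_curve.append((len(subset), accuracy(model, validation)))

for size, score in learning_curve:
    print(f"{size:5d} examples -> validation accuracy {score:.3f}")
```

Plotting `learning_curve` with dataset size on the x-axis and the score on the y-axis gives the learning curve. For a real project, repeat each point with several random subsets to see how noisy the curve is.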

Step 5: Look for the Plateau

If model performance improves sharply with more data, you probably need more. If improvements flatten, your bottleneck may no longer be volume — it may be label quality, feature design, model choice, or class imbalance.
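A plateau can also be flagged programmatically. The sketch below is a naive single-step check: it reports the first dataset size after which the score improves by less than a chosen threshold. The `min_gain` value and the scores are hypothetical, and a noisy curve would need smoothing or repeated runs before a check like this is trustworthy.

```python
def find_plateau(curve, min_gain=0.005):
    """Return the first dataset size after which the score gain drops below min_gain.

    `curve` is a list of (dataset_size, score) pairs sorted by size.
    Returns None if the score is still improving at every step.
    """
    for (prev_size, prev_score), (_, score) in zip(curve, curve[1:]):
        if score - prev_score < min_gain:
            return prev_size
    return None

# Hypothetical validation F1 scores, for illustration only.
curve = [(1000, 0.71), (2000, 0.78), (4000, 0.83), (8000, 0.85), (16000, 0.852)]
print(find_plateau(curve))  # 8000
```

In this made-up example, doubling the data from 8,000 to 16,000 examples adds only 0.002 to the score, which suggests the next investment should go into quality or coverage rather than volume.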

Step 6: Review Segment-Level Performance

Check how the model performs not just overall, but across important classes and edge cases. A model may plateau overall while still underperforming badly on minority segments. This method gives stakeholders a more realistic estimate of how much additional data is worth collecting.
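To make the segment-level review concrete, the sketch below compares overall accuracy with per-class recall on a made-up, imbalanced set of predictions. The class names and counts are hypothetical.

```python
from collections import defaultdict

def per_class_recall(y_true, y_pred):
    """Fraction of each true class that the model predicted correctly."""
    totals, hits = defaultdict(int), defaultdict(int)
    for truth, pred in zip(y_true, y_pred):
        totals[truth] += 1
        hits[truth] += truth == pred
    return {cls: hits[cls] / totals[cls] for cls in totals}

# Hypothetical predictions: 95 "normal" cases and 5 "fraud" cases.
y_true = ["normal"] * 95 + ["fraud"] * 5
y_pred = ["normal"] * 95 + ["normal"] * 4 + ["fraud"] * 1

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
recall = per_class_recall(y_true, y_pred)
print(overall)  # 0.96
print(recall)   # {'normal': 1.0, 'fraud': 0.2}
```

The model looks 96% accurate overall yet catches only one in five fraud cases. A gap like this is a signal to collect more examples for that specific segment, not for the dataset as a whole.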

How to Know When You Have Enough Training Data

You likely have enough data when:

- model performance improves only marginally as more data is added

- validation results are stable across multiple runs or folds

- important classes perform acceptably, not just the majority class

- performance holds on a clean, untouched test set

- the remaining errors are caused more by label noise or ambiguity than by lack of examples

You likely need more data when:

- the learning curve is still climbing

- rare classes perform poorly

- the model fails on common real-world variations

- results fluctuate heavily between runs

- test performance drops sharply compared to validation performance
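Several of these signals, such as stability across runs or folds, are easy to quantify. The sketch below summarizes a set of repeated scores and flags high variance. The 0.02 standard-deviation threshold and the fold scores are assumptions for illustration; the right tolerance depends on your metric and use case.

```python
from statistics import mean, stdev

def stability_summary(scores, max_std=0.02):
    """Summarize scores from repeated runs or folds and flag high variance."""
    spread = stdev(scores)
    return {"mean": mean(scores), "std": spread, "stable": spread <= max_std}

# Hypothetical validation accuracies from five cross-validation folds.
stable_run = [0.86, 0.87, 0.85, 0.86, 0.87]
noisy_run = [0.70, 0.88, 0.79, 0.91, 0.66]

print(stability_summary(stable_run)["stable"])  # True
print(stability_summary(noisy_run)["stable"])   # False
```

Results that swing as widely as the second run often mean the folds are too small or too different from each other, which is itself a hint that more or better-distributed data is needed.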

How To Reduce Training Data Requirements

Sometimes the challenge is not model design — it is data scarcity, budget, or time-to-market. In those cases, teams can reduce their dependence on massive data volumes with the right strategies.

Data Augmentation

Data augmentation creates new training examples from existing data. In computer vision, this may include cropping, rotating, flipping, or adjusting brightness. In NLP and speech, augmentation must be more careful, but controlled transformations can still help.

Used correctly, augmentation improves robustness and helps models generalize better. Used poorly, it can introduce noise or unrealistic examples.
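As a minimal sketch of what image augmentation does, the example below represents a grayscale image as a list of pixel rows and produces flipped and brightness-shifted variants. The function names are hypothetical, and real projects typically use an image library for this. Whether a transformation preserves the label is also task-dependent: a horizontal flip is usually harmless for product photos but not for images containing text.

```python
def flip_horizontal(image):
    """Mirror a grayscale image (list of pixel rows) left to right."""
    return [row[::-1] for row in image]

def adjust_brightness(image, delta):
    """Shift every pixel by delta, clamped to the valid 0-255 range."""
    return [[max(0, min(255, pixel + delta)) for pixel in row] for row in image]

def augment(image):
    """Return the original image plus three simple variants."""
    return [
        image,
        flip_horizontal(image),
        adjust_brightness(image, 40),
        adjust_brightness(image, -40),
    ]

# Tiny 2x3 "image" used only to show the transformations.
image = [[0, 50, 100],
         [150, 200, 250]]
for variant in augment(image):
    print(variant)
```

One labeled image becomes four training examples here, at no extra annotation cost. The variants are not independent new data, though, so they should be generated only from the training set and never mixed into validation or test sets.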

Transfer Learning

Transfer learning lets you adapt an existing model for a new task instead of training from zero. This is often one of the most effective ways to reduce training data requirements.

Pre-trained Models

Pre-trained models such as BERT-like NLP models or established vision backbones can provide strong starting points. Rather than learning everything from scratch, the model begins with useful prior knowledge.

Active Learning

If labeling is expensive, active learning can help prioritize the most informative examples first. This improves annotation efficiency and can reduce the number of labels needed to reach useful performance.
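One common form of active learning is uncertainty sampling: send annotators the examples the current model is least sure about. The sketch below assumes a binary classifier that outputs a probability for the positive class. The document ids and probabilities are hypothetical, and other selection strategies exist.

```python
def select_most_uncertain(probabilities, batch_size):
    """Pick the unlabeled examples whose predicted probability is closest to 0.5.

    `probabilities` maps an example id to the model's predicted probability
    of the positive class (binary classification).
    """
    ranked = sorted(probabilities, key=lambda ex_id: abs(probabilities[ex_id] - 0.5))
    return ranked[:batch_size]

# Hypothetical model outputs for five unlabeled documents.
predicted = {"doc_1": 0.97, "doc_2": 0.52, "doc_3": 0.10, "doc_4": 0.45, "doc_5": 0.70}
print(select_most_uncertain(predicted, batch_size=2))  # ['doc_2', 'doc_4']
```

The selected batch is labeled, added to the training set, and the model is retrained before the next round of selection.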

Synthetic Data

Synthetic data can be useful when real-world data is scarce, sensitive, or hard to collect, especially in areas such as healthcare, finance, autonomous systems, and edge-case simulation. But it should supplement — not blindly replace — real, representative data.

Real-world Examples Of Machine Learning Projects With Minimal Datasets

It may sound unlikely that ambitious machine learning projects can succeed with small datasets, but some reported cases suggest otherwise.

| Kaggle Report | Healthcare | Clinical Oncology |
| --- | --- | --- |
| A Kaggle survey reportedly found that over 70% of machine learning projects were completed with fewer than 10,000 samples. | With only 500 images, an MIT team reportedly trained a model to detect diabetic neuropathy from eye scans. | Continuing with healthcare, a Stanford University team reportedly developed a model to detect skin cancer with only 1,000 images. |

Making Educated Guesses

There is no magic number regarding the minimum amount of data required, but there are a few rules of thumb that you can use to arrive at a rational number.

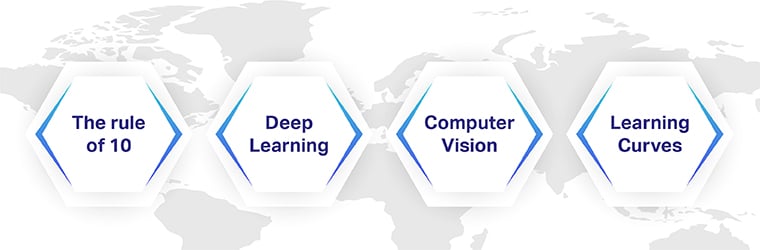

The Rule of 10

As a rule of thumb, the number of training examples should be about ten times the number of model parameters, also called degrees of freedom. The rule of 10 aims to keep the model from fitting noise by giving each parameter enough examples to constrain it. It tends to be most useful for small, classical models, and it gives you a rough starting figure to begin planning with, not a guarantee.
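As a worked illustration of the arithmetic, the sketch below applies the rule to a hypothetical logistic regression model. The feature count is made up, and the result is only a planning figure.

```python
def rule_of_ten(num_parameters, factor=10):
    """Rough starting estimate of training examples for a given parameter count."""
    return num_parameters * factor

# Hypothetical logistic regression with 20 input features plus one bias term.
num_parameters = 20 + 1
print(rule_of_ten(num_parameters))  # 210
```

The rule scales poorly to deep networks with millions of parameters, where it would suggest impractically large datasets. For those models, learning curves and pre-trained starting points are usually a better guide.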

Deep Learning

Deep learning models generally keep improving as they are given more data. A widely cited rule of thumb from the Deep Learning textbook by Goodfellow, Bengio, and Courville is that a supervised deep learning algorithm will generally achieve acceptable performance with around 5,000 labeled examples per category, and will match or exceed human performance when trained on a dataset of at least 10 million labeled examples.

Computer Vision

If you are using deep learning for image classification, a commonly cited starting point is around 1,000 labeled images per class, although a pre-trained model can often work with fewer.

Learning Curves

Learning curves show how a model's performance changes with the amount of training data. With model skill on the Y-axis and training set size on the X-axis, you can see how dataset size affects the outcome of the project.

The Cost of Having Too Little Data

When teams train on limited, narrow, or biased datasets, the model may appear promising in development but fail in production.

Too little data can lead to:

- overfitting

- weak generalization

- unstable predictions

- poor performance on minority classes

- higher bias risk

- more iteration time later

In other words, the limitations in your training data often become the limitations of your product.

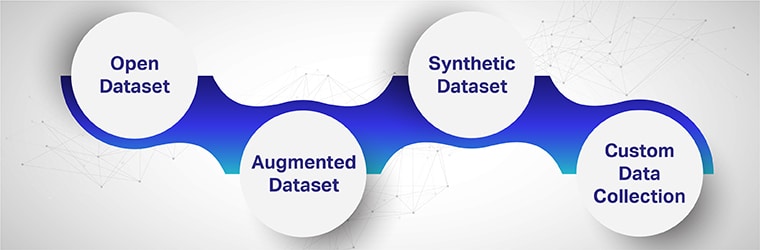

What to Do If You Need More Datasets

When you identify a data gap, the solution is not always “collect everything.” The smarter approach is to expand the dataset strategically.

1. Use Open Datasets Carefully

Open datasets can help for prototyping or benchmarking, but they are not always suitable for production use. Teams should review provenance, consent, quality, relevance, and coverage before depending on them.

2. Collect Custom Data for Your Use Case

If the target environment is highly specific, custom data collection is often the best option. This is especially true for domain-heavy workflows such as healthcare AI, conversational AI, computer vision edge cases, and multilingual systems.

3. Improve Existing Data Through Annotation

Many teams already have raw data but lack structure. Annotation, relabeling, taxonomy cleanup, and quality review can unlock value faster than collecting brand-new datasets.

4. Rebalance Underrepresented Classes

If performance is weak on specific categories, focus collection and labeling on those high-impact gaps rather than expanding the whole dataset evenly.
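A targeted collection plan can be as simple as comparing current class counts with a per-class goal. In the sketch below, the class names, counts, and the target of 500 examples per class are all hypothetical. In practice the target would come from your learning curves and segment-level results.

```python
def collection_targets(class_counts, target_per_class):
    """Number of additional examples to collect for each class to reach the target."""
    return {cls: max(0, target_per_class - count) for cls, count in class_counts.items()}

# Hypothetical current dataset composition.
class_counts = {"ok": 4200, "scratch": 310, "dent": 85}
print(collection_targets(class_counts, target_per_class=500))
# {'ok': 0, 'scratch': 190, 'dent': 415}
```

Here the plan calls for 605 new labeled examples focused on the two weak classes, instead of growing the whole dataset evenly.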

5. Add Synthetic or Augmented Data Where Appropriate

When real data is limited or sensitive, synthetic and augmented data can help improve coverage — but it should be validated carefully against real-world distributions.

6. Work with a Specialized Data Partner

For teams building production AI at scale, partnering with a provider that can collect, license, annotate, validate, and govern high-quality training data can significantly reduce project risk and speed up deployment.

Final Thoughts

There is no magic number for training data in machine learning. The right amount depends on the use case, model type, data quality, class diversity, validation strategy, and target performance.

The most effective way to estimate training data needs is to begin with a representative sample, measure performance using learning curves, and expand the dataset strategically based on where the model still fails.

For some projects, a modest, high-quality dataset may be enough. For others, especially high-stakes or highly variable environments, success depends on large, carefully curated, and well-annotated datasets.

What matters most is not simply having more data — but having the right data.

Do you have a great project in mind but are waiting for tailor-made datasets to train your models, or are you struggling to get the right outcome from your project? We offer extensive training datasets for a variety of project needs. Talk to one of Shaip's data scientists today to learn how we have delivered high-performing, quality datasets for clients in the past.

FAQs

How much training data is enough for machine learning?

There is no fixed number. The right amount depends on the task, model complexity, label quality, class balance, and target accuracy. The most reliable way to estimate it is to train on increasing subsets and measure performance improvements.

How do I know if I need more training data?

You likely need more training data if model performance continues improving as data size increases, if rare classes perform poorly, or if results are unstable across runs.

Can transfer learning reduce training data requirements?

Yes. Transfer learning allows models to reuse knowledge from previously trained systems, which can significantly reduce the amount of task-specific labeled data needed.

Is more data always better for machine learning?

Not necessarily. More low-quality or poorly labeled data can hurt performance. In many cases, improving data quality, balance, and representativeness is more valuable than simply increasing volume.

How much data do I need for deep learning?

Deep learning models typically require more data than classical machine learning models, especially for image, speech, and language tasks. However, pre-trained models and transfer learning can reduce this requirement.